Physics World

Jessica Dempsey takes up post as head of the Square Kilometre Array Observatory

SKAO is set to become the world’s largest and most sensitive radio telescope

The post Jessica Dempsey takes up post as head of the Square Kilometre Array Observatory appeared first on Physics World.

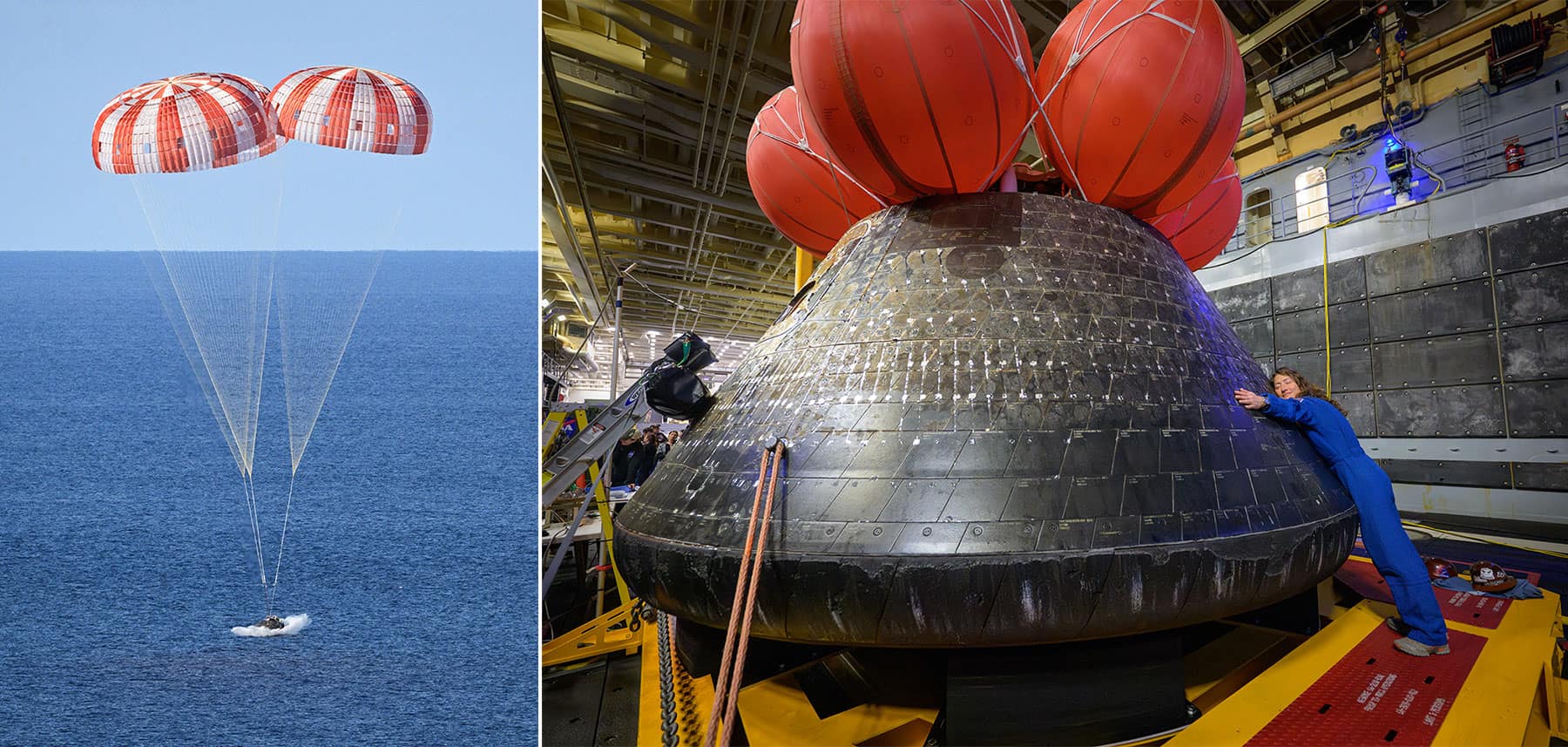

Astronomer Jessica Dempsey has become director-general of the Square Kilometre Array Observatory (SKAO), which will be the world’s largest and most sensitive radio telescope when it opens next year. Dempsey will serve a five-year term and succeeds Philip Diamond, who had held the role since 2012.

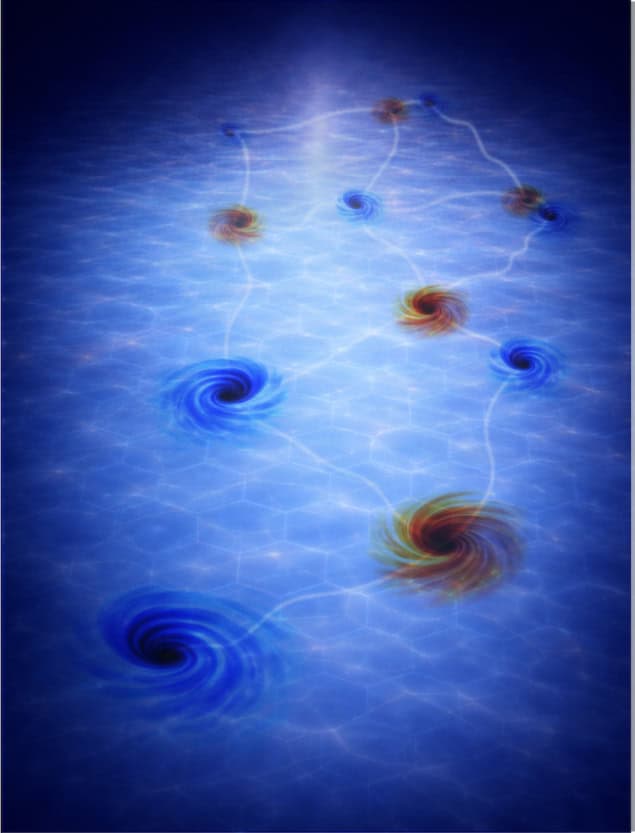

Three decades in the making, the SKAO is based in South Africa and Australia and consists of 197 dishes and 131 072 antennas designed to study how galaxies form, the nature of dark matter, and whether life exists on other planets.

The Australian side, known as SKA-Low, will focus on low-frequency observations, while South Africa’s SKA-Mid will observe mid-range frequencies.

The organization’s headquarters is at Jodrell Bank in the UK, and SKAO has 13 full members, including China, Germany and India.

From film star to the stars

Dempsey studied both astrophysics and theatre and film science as an undergraduate at the University of New South Wales before becoming an actor in the late 1990s.

Dempsey then did a PhD in astronomy at UNSW, graduating in 2007, before working at the James Clerk Maxwell Telescope at Mauna Kea Observatory in Hawaii, where she became operations manager in 2012 and deputy director of the telescope in 2016.

In 2022 Dempsey became director of the Netherlands Institute for Radio Astronomy. Throughout her career she has championed more equitable opportunity and experience for women and all underrepresented individuals in science, and in 2023 she became professor of ethics in astronomy at Radboud University.

Dempsey says it is “humbling” to lead the organization and is “passionately dedicated” to its success.

SKAO is currently preparing for the start of “science verification”, in which astronomers will gain access to the first SKAO data. This is due to begin for the SKA-Low telescope in Australia in the second half of 2027.

“As someone who loves nothing more than building and running telescopes, there is not a better time to be asked to take up this role – we are just getting to the cool stuff,” adds Dempsey. “This is a daring project, unprecedented in scale and scope, and it will need the skills of every single team member on three continents and all the support of our broad global partnership to see it come to light.”

Diamond, meanwhile, noted that the observatory is in “very good hands” with Dempsey’s appointment.

“This is a demanding role, with the need to balance scientific, political, diplomatic, financial and many other considerations,” adds Diamond. “I have full faith in [Dempsey’s] ability to lead this extraordinary organization through its next chapter.”

What physicists can do to support the green economy

Matin Durrani summarizes a debate at the Institute of Physics on the future of green energy

The post What physicists can do to support the green economy appeared first on Physics World.

From heatwaves to extreme rainfall, the impact of climate change is rapidly becoming a reality in our daily lives and a danger to our planet. But physicists are in a great position to help, with physics-based research bringing about practical, real-world solutions, whether it’s more efficient solar cells, better climate models, or novel materials for capturing carbon dioxide from the atmosphere.

There are huge economic and commercial benefits from such work too. A 2023 report from the Institute of Physics (IOP), entitled Physics Powering the Green Economy, estimates there are almost 1800 companies in the UK and Ireland taking green technologies to market with a combined turnover of £750bn.

Last year a follow-up IOP Impact report entitled Unleashing Physics to Power the UK Energy Sector identified the most promising physics technologies for transforming the UK’s energy system. These fall into three main areas: energy generation (nuclear power, photovoltaics), storage (batteries) and transmission (high-temperature superconductors).

The clean-energy revolution will not be easy, however. As the IOP report points out, the UK has a strong research base, good international collaborations, and a growing pipeline of spin-out and early-stage companies. But the country doesn’t invest enough in technology scale-up facilities, faces critical skill shortages, and isn’t great at recycling either.

To discuss how physicists are supporting the green economy – and what more they can do – a panel debate was recently held at the IOP in central London. Attended by Prince Edward, the Duke of Edinburgh, as well as about 100 business leaders, policy chiefs, senior physicists, and IOP and IOP Publishing staff, it was chaired by Tara Shears, the IOP’s vice-president for science and innovation.

The panel featured ex-BP boss John Browne, who now works in green energy; Emily Nurse from the UK’s Climate Change Committee; former Sizewell C energy-strategy director David Cole; solar-cell physicist Jenny Nelson from Imperial College; and Nellie Technologies founder Stephen Milburn. The following is an edited extract of the discussion.

Physicists for a greener future

John Browne is chair of BeyondNetZero, a climate-growth equity venture firm. He was group chief executive of energy giant BP from 1995 to 2007, having joined the firm in 1966 after studying natural sciences.

Emily Nurse, who was originally an experimental particle physicist, is the director of net zero at the UK’s Climate Change Committee, which advises the UK government on reducing emissions and adapting to the impacts of climate change.

David Cole, an engineer by training, was at the time of the discussion director of energy strategy at the Sizewell C nuclear-power plant, which is being built in Suffolk in the UK. When complete, it is expected to meet up to 7% of the UK’s total electricity demand. He is now executive president, consulting, at energy firm Wood.

Jenny Nelson is a physicist at Imperial College London, where she has spent almost 30 years developing advanced materials for photovoltaic solar cells. She is also mitigation programme lead at the Grantham Institute of Climate Change and the Environment.

Stephen Milburn is a physicist who is founder and chief executive of the firm Nellie Technologies in South Wales. The firm uses biomass to remove carbon dioxide from the atmosphere; the biomass can then be used as animal feed or construction material.

What role are physicists currently playing in our quest for a greener economy?

John Browne: I made a wonderful decision 60 years ago, when I was 18, which was to read physics. After graduating, I became an engineer, but over the last 30 years physics has come back into my life as I’ve found myself doing something very important – trying to get to net zero. Physics, you see, touches absolutely everything.

All that I’ve ever done – whether it’s renewable energy or “old energy” [fossil fuels] in my old life – starts with physics. Whether you’re involved in chemistry, biology, electronics or engineering, it could not exist without a much deeper understanding of physics. We have to make sure everybody knows that – but I don’t think people currently do. They tend to think engineering is the only enabler for commercialization, but physics is there.

Emily Nurse: I started out as a particle physicist working at CERN on the Large Hadron Collider but for the last four years, I’ve been involved in climate policy and now work with the UK’s Climate Change Committee. We are the UK government’s official advisers on its climate targets – and assessing progress towards meeting those targets. As we celebrate global decarbonization to date, we need to remember it’s all underpinned by physics.

Take the rise of solar power, for example, which has been the fastest-growing source of global electricity generation for 20 years in a row. Solar installations in 2024 were double those in 2022. Along with wind, solar has led to a reduction in electricity from fossil fuels. We’re seeing the costs of solar plummeting and they just keep falling further.

In the UK, solar power has been growing more slowly, but it’s starting to pick up and is going to be a really important part of the electricity mix. We’ve also got a lot of wind here in the UK – it’s a very windy island after all. I would also like to give a shout out to heat pumps: as a physicist, how can you not love their efficiency?

David Cole: I am an engineer, not a physicist, but I’ve spent my career in lots of different sectors and been fascinated with the role that energy plays in creating a better society. What’s really interesting at Sizewell C is the ownership structure, which involves both state and private investment. It’s the first time private investment has been used for a new nuclear build in the UK.

Getting this hybrid financial structure over the line was not trivial – it took a lot of effort – but I think it will drive great performance. We’re also trying to use as much UK content in the plants as possible, whether that’s materials, skills or technology. I hope it leads to a virtuous circle, in which the more plants we build, the more we can reap from that investment. Sizewell C will, in other words, bring down energy costs, which is fundamental to economic growth.

Jenny Nelson: I have been active in research into solar photovoltaic (PV) materials and devices for over 30 years and we should celebrate how much has happened in the field during that time. In the last 10 years, we have seen capacity increase globally by more than a factor of 10, we’ve seen the efficiency of solar cells increase, and we’ve seen the cost come down by almost a factor of eight, all of which is remarkable.

The cheapest form of electricity globally, in other words, is now from solar PV, which was not the case 30 years ago. These developments have come partly from economies of scale and partly from technological innovations that have now fed through into production. Those innovations are firmly rooted in physics – whether it’s changes in device structure due to our understanding of semiconductor physics or new developments in the optical properties of materials.

The next generation of PV cells, which are likely to be silicon-based tandem devices, will also depend on scientific breakthroughs and innovations.
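
To put Nelson’s decade-long figures in annual terms, the short Python sketch below converts an overall change by some factor over ten years into an equivalent constant yearly rate. It is only indicative: it assumes smooth compound change, which real markets do not follow, and it treats “more than a factor of 10” and “almost a factor of eight” as exactly 10 and 8.

```python
def annual_rate(factor: float, years: float) -> float:
    """Constant yearly rate that compounds to `factor` over `years` (negative means decline)."""
    return factor ** (1.0 / years) - 1.0


# Assumption: the quoted factors are treated as exact for illustration
capacity_growth = annual_rate(10.0, 10)  # capacity up by a factor of 10 in 10 years
cost_change = annual_rate(1 / 8, 10)     # cost down by a factor of 8 in 10 years

print(f"capacity: about {100 * capacity_growth:.0f}% per year")
print(f"cost change: about {100 * cost_change:.0f}% per year")
```

Running it gives capacity growth of roughly 26% a year and a cost fall of roughly 19% a year.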

Stephen Milburn: I’m chief executive of Nellie Technologies, which is based in South Wales on the site of a former chemical-weapons storage facility. We’re using biomass waste for removing atmospheric carbon dioxide, and if you visit us, you’ll see all kinds of activity: in one corner there’s chemistry, in another engineering and in the next there’s biology and biochemistry. But physics is at the heart of the technology. Physicists are a bit arrogant when we say we think we can do everything, but the fact is we probably can.

But we should also celebrate the work that has gone on to create a market in which carbon-emission credits can be bought and sold. Trading carbon credits has been a bit of a dark activity over the last 10 years, with double counting and other bad practices by firms wishing purely to make a profit. However, the market does have the power to regulate itself – in fact the alignment we’re starting to see between the UK and the EU will help greatly.

What are the biggest growth opportunities for the green-economy sector?

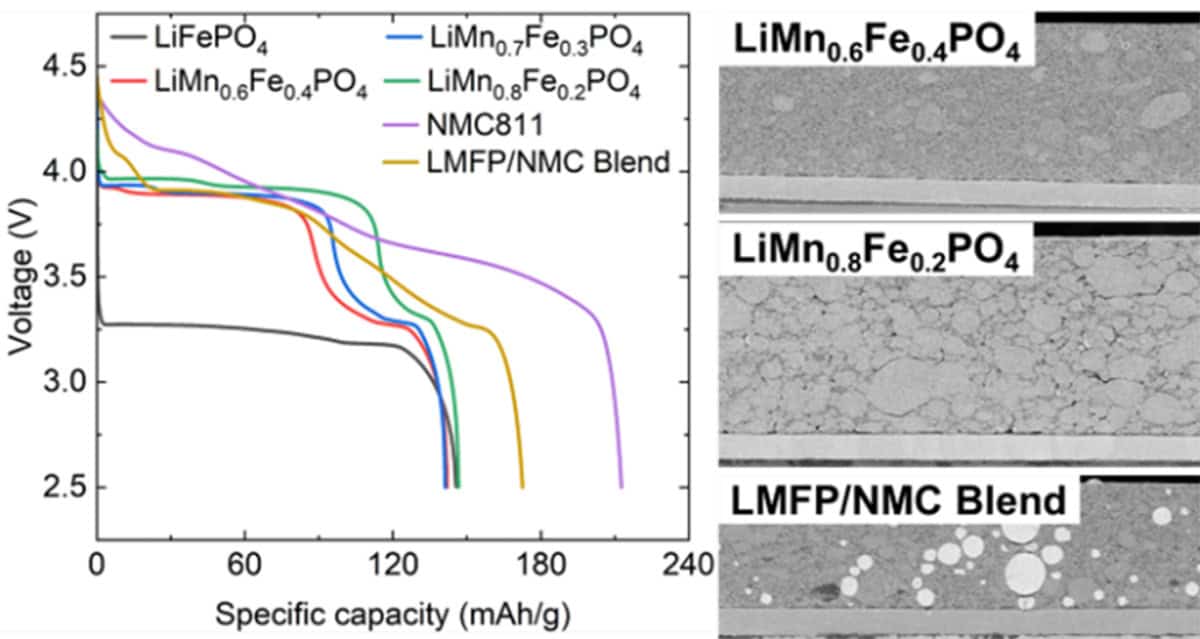

John Browne: First, we can do much more with what we’ve already got – for example we could increase our offshore wind or rethink whether we should go back into onshore wind. Second, we can improve what we’re doing – for instance, by increasing the efficiency of solar panels to their theoretical maximum, which would make rooftop solar economically attractive. Third, there are new opportunities, such as metal–organic frameworks and nuclear fusion.

However, the UK needs to avoid doing things that others are doing much better. The race for the best battery in the world is, for example, probably going to be won elsewhere. What we do here in the UK needs to move the needle globally, which means thinking about how to scale and finance it properly. The UK shouldn’t end up as a secondary player.

Emily Nurse: The UK has made a lot of progress in our quest to reach net zero by 2050. Since 1990, for example, we’ve halved our carbon emissions, mainly by decarbonizing electricity – phasing out coal and reducing gas generation while significantly increasing wind, solar and other renewables. Electricity generation now accounts for only around 7% of UK emissions, which are dominated by transport (cars and vans) and heating (oil and gas boilers).

Reducing emissions still further will predominantly come from moving to electric technologies, including electric vehicles and heat pumps, and by further decarbonizing the electricity supply. There will be a backbone of wind and solar, but to ensure a secure supply, we’ll need nuclear, carbon capture and storage, hydrogen and batteries. We’ll have to reduce emissions from agriculture and land use too.

A report from the Confederation of British Industry (CBI) last year estimated that the net-zero economy grew by 10% in 2024, which is three times faster than the rest of the UK economy. But we’ll need more innovations to continue to bring costs down – and we’ll also need to provide incentives to boost the take-up of electric technologies. If we do that, there’ll be an overall saving to the UK economy in about 15 years’ time, our analysis suggests. There are huge opportunities for green growth to come from this investment.

David Cole: I agree that for the UK to be competitive, the cost of energy has to come down – not just for domestic customers but for businesses too. In fact, there are two main opportunities. First, we have to adopt a “whole-systems” approach. If we’re building a power station, for example, can we use every bit to its maximum potential?

Let’s say I’m running a direct air-capture plant operating at 25–30 °C – can I use the waste flow from my coolant system to encourage new industries? Can it support nearby hydrogen generation plants or companies making, say, synthetic aviation fuel? Those questions involve thinking about physics and engineering as well as materials science, which is also super important.

Whichever way you look at it, we’re talking about building a lot of hardware, which involves materials. How much energy per unit mass are they using? Can we recycle those materials? What can we do with the waste products? Ultimately, what is really important is energy security: where does your energy come from, who made it and what impact does it have on the environment?

Jenny Nelson: The net-zero economy is growing significantly faster than the rest of the economy and I think that will continue. But decarbonizing the power sector only addresses part of the problem and we’re going to see a big transition across the rest of industry, agriculture and elsewhere that will generate a wide range of opportunities and stimulate the economy too. I’m not just talking about rolling out more renewables, but about integration – bringing together the generation and storage of energy, ensuring that we are managing demands and have the right infrastructure.

As for my area of photovoltaics, we’ve seen great ideas and technologies come out of the UK that are very likely to be developed outside the UK because the manufacturing capacity isn’t here. Nevertheless, those ideas and innovations can still benefit the country through licensing, partial manufacturing and new technology.

One thing to remember about solar power is that it’s distributed. You can have solar generation without being connected to the grid. That not only opens up markets for applications where you want to generate electricity locally, but also provides a route to energy security through back-up generation, in which solar power will play an important part.

Stephen Milburn: Having a strong green-technology manufacturing base is a huge opportunity for the UK. My company is based in South Wales, where we have lots of highly skilled people who used to work in traditional industries but now don’t have many places to go. Yes, there’s a fantastic semiconductor industry here, but when it comes to deploying green technology we cannot outsource that responsibility to other parts of the world.

Green tech needs to be deployed in the UK’s industrial heartlands to take advantage of the skills we already have, but which we are at risk of losing. In fact, if we don’t nurture those jobs and skills here there’s a real risk they will be gone forever. Having a strong green-technology manufacturing base is a huge opportunity for the UK.

What needs to happen so that these opportunities can be put into practice?

Stephen Milburn: Many science graduates leave university equipped with solid academic rigour and a great scientific understanding, but they often lack practical green-technology skills. This summer my company is therefore hoping to launch a climate apprenticeship programme, which will allow graduates to pick up those skills. We need to build green-tech skills in the real economy, in particular those that will deal with climate change.

Jenny Nelson: The UK must do more to support its own innovations. We need better regulations to avoid unnecessary bottlenecks. We need to invest in infrastructure like the grid. We should completely avoid subsidizing fossil fuels and instead divert any subsidies into alternative economies. Finally, we need to train and educate people, showing the public the potential of green technology so that they become part of the transition, for example by generating their own electricity.

David Cole: We need to integrate our policies on industry, energy, land use and AI so that we can invest in them all as growth areas. In particular, I’d like to see a long-term nuclear programme in which we build a fleet of new reactors all of the same design, which will drive down costs by letting us replicate a particular technology. It’s also vital that we get a high proportion of UK content and technology into these reactors, which will lead to a virtuous circle, with money coming back into the economy that we can re-invest in industrial and academic partnerships.

Emily Nurse: What’s vital is consistency in policies; we need certainty. In the UK, we are fortunate to have world-leading climate legislation in the form of the 2008 Climate Change Act, which does not just make it a legal requirement to reach net zero by 2050 but also gives us targets along the way. It means we know what we need to do in both the medium- and long-term, which gives certainty to investors, businesses, innovators and consumers.

So the first thing we need to do is keep the Climate Change Act. Then, of course, we’ve got to address barriers to delivery, including having the right incentives to electrify the economy. And what’s really important is communication – supporting communities through the transition and making sure they realize the benefits, not just in terms of reducing carbon emissions but of having cleaner, better and more efficient technologies too.

John Browne: First, we must never stop investing in people who can discover things and translate them into real commercial products. Second, we need to understand how to scale things, which means focusing on the winners and getting rid of things that are “nice to have” but aren’t going anywhere. That’s not easy because you have to push people to say, “You’ve done great work, but you’ll have to stop”.

What’s more, to scale new technology, people have to learn what it takes. When I’m in the US, I often speak to chief executives who can explain their technology to the financier who’s supporting it, whereas here in the UK that often doesn’t happen.

Third, we need to maintain confidence in what we’re doing. I often talk to people who think that it’ll be really expensive to get to net zero, but in fact estimates suggest that each household would only have to spend an average of about £150 a year to get there. So it’ll be less than the cost of a TV licence to get to net zero.

Of course the investment needed will be “lumpy” – it’s not as simple as just levying a fee – but the point about governments is that they can smooth things out. That is what they have done in the past and it’s what they should continue to do.

- For more information about the IOP’s work on the green economy, see the IOP website. You can also keep up to date with all the latest research in the field through IOP Publishing’s series of open-access Environmental Research Journals.

Photonic glasses deliver angle-independent structural colour, including reds

Gold nanoparticles absorb blue light

The post Photonic glasses deliver angle-independent structural colour, including reds appeared first on Physics World.

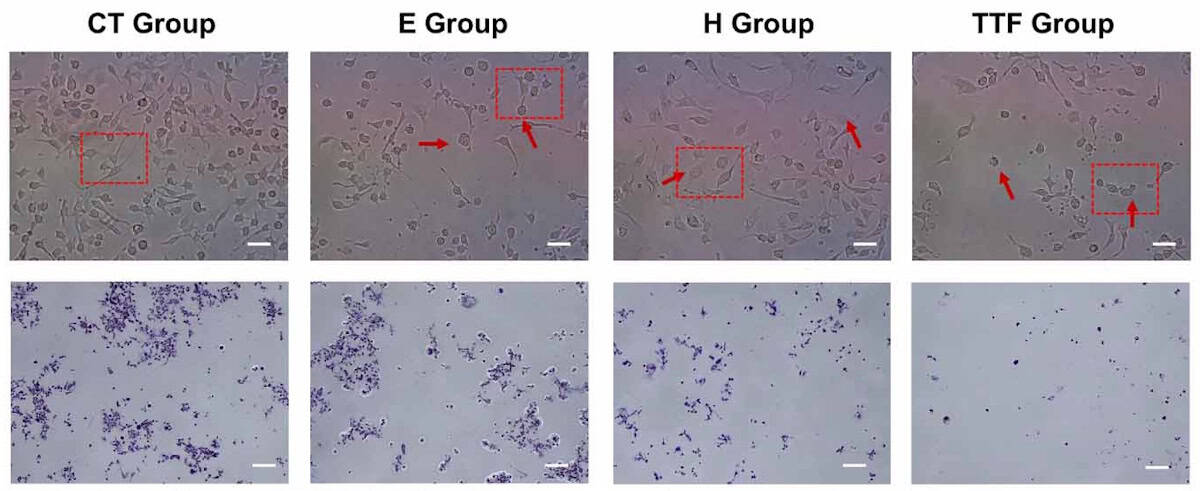

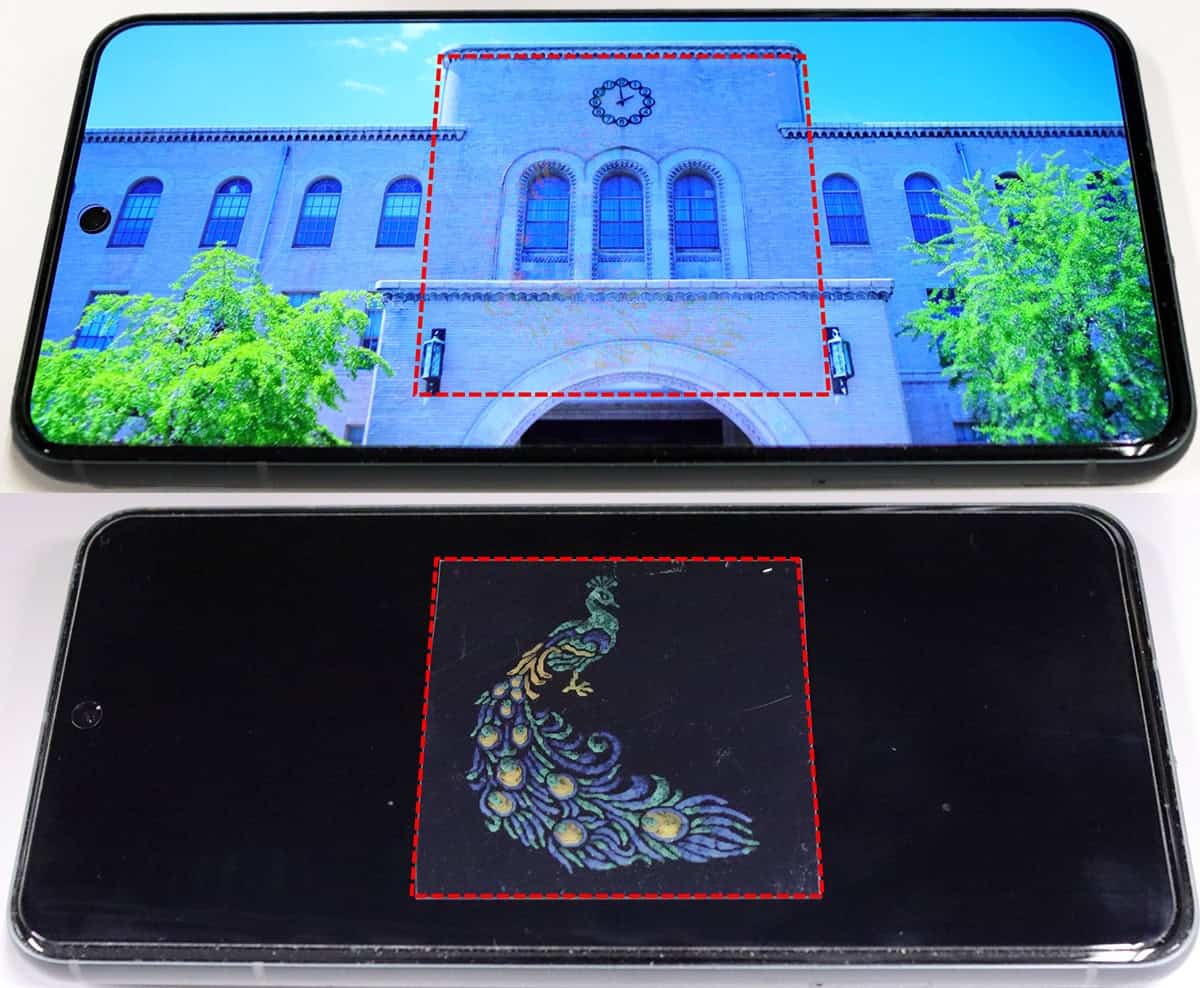

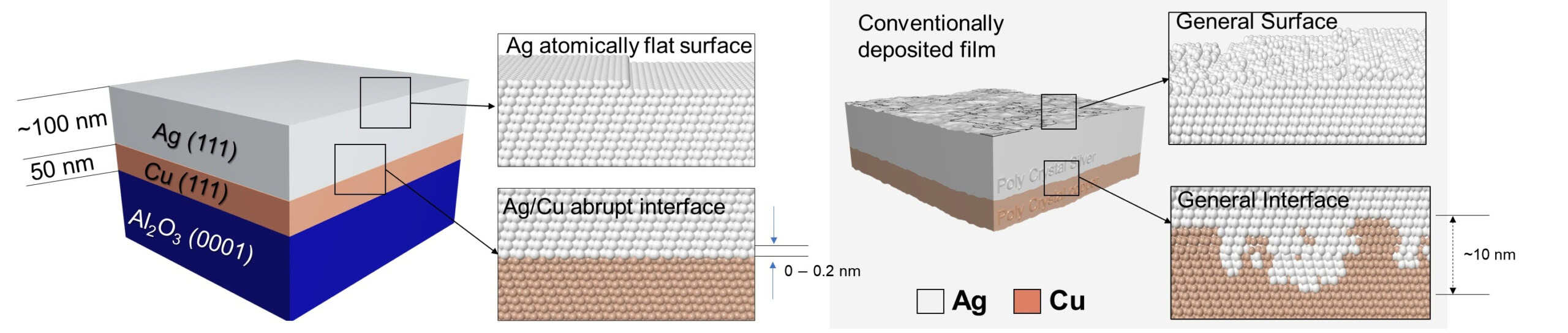

Photonic glasses containing gold-cored, silica-shelled nanoparticles can produce high-purity colours across the visible spectrum. Crucially, the colours are independent of viewing angle. The design, developed by researchers in Korea, avoids the short-wavelength scattering that in previous photonic glasses has prevented a true red from being attained and has blurred other colours.

Synthetic materials are usually coloured using pigments, such as those found in dyes or paints. A pigment has a chemical composition that causes it to reflect light at certain wavelengths and absorb light at other wavelengths. Nature, however, makes widespread use of structural colour, whereby the physical structure of a material dictates which wavelengths are reflected and which are absorbed. A familiar example is iridescence, which is responsible for rainbow-like colours on some plants and animals.

Creating colour using structure rather than chemistry has several advantages. One is that there are no chemical chromophores to be bleached by sunlight, so the colour tends to be more durable. Another benefit is that there is no dye to leach if the material comes into contact with water or another solvent.

While structural colour can be created using traditional photonic crystals, these can be tricky to produce controllably. Moreover, a surface that relies on interference effects is inevitably iridescent – which means that its colour changes with the viewing angle.
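
Why interference-based colour shifts with angle can be seen from the Bragg–Snell relation commonly used for colloidal photonic crystals, λ = 2d√(n_eff² − sin²θ), where d is the lattice-plane spacing, n_eff an effective refractive index and θ the angle of incidence in air. The Python sketch below evaluates it; the values d = 220 nm and n_eff = 1.40 are hypothetical, chosen only for illustration, and are not taken from the study.

```python
import math


def bragg_peak_nm(d_nm: float, n_eff: float, theta_deg: float) -> float:
    """First-order Bragg–Snell peak wavelength (nm) of a layered photonic crystal.

    d_nm: lattice-plane spacing; n_eff: effective refractive index;
    theta_deg: angle of incidence in air, measured from the surface normal.
    """
    s = math.sin(math.radians(theta_deg))
    return 2.0 * d_nm * math.sqrt(n_eff ** 2 - s ** 2)


# Hypothetical crystal parameters, for illustration only
for theta in (0, 20, 40, 60):
    print(f"{theta:2d} deg -> {bragg_peak_nm(220, 1.40, theta):.0f} nm")
```

For these values the reflected peak moves from 616 nm at normal incidence to 484 nm at 60°, from orange-red to blue, which is exactly the iridescence that angle-independent photonic glasses avoid.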

Short-range order

One solution is colloidal photonic glasses, which are not physically textured but have particles such as silica or polymers dispersed throughout them with short-range order. These can be produced simply by solution processing, and their colour does not vary with viewing angle. The principal problem with these glasses is the attainment of colour purity – especially in the red. The challenge is that the glasses scatter light more effectively at shorter (bluer) wavelengths owing to Rayleigh scattering. This effect makes the sky appear blue and adds unwanted blue light to structural colour.
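
The strength of this blue bias follows from the Rayleigh law, in which scattered intensity scales as λ⁻⁴. The Python sketch below compares an illustrative blue wavelength (450 nm) with a red one (650 nm). Strictly, the law holds only for scatterers much smaller than the wavelength, which the particles in photonic glasses are not, so this shows the trend rather than the actual spectrum of any glass.

```python
def rayleigh_ratio(lambda_short_nm: float, lambda_long_nm: float) -> float:
    """Scattering strength at the shorter wavelength relative to the longer one (I ∝ λ^-4)."""
    return (lambda_long_nm / lambda_short_nm) ** 4


# Illustrative wavelengths for blue and red light
ratio = rayleigh_ratio(450, 650)
print(f"450 nm light is scattered about {ratio:.1f}x more strongly than 650 nm light")
```

The ratio comes out at about 4.4, which is why unwanted scattering piles up at the blue end of the spectrum.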

In the new work, nanophotonics expert Seungwoo Lee of Korea University in Seoul and colleagues synthesized 230 nm core–shell nanoparticles in which a silica shell surrounds a 20 nm gold cluster. The gold core has a plasmonic resonance that absorbs shorter wavelengths. The researchers then dispersed the nanoparticles in ethoxylated trimethylolpropane triacrylate, a photocurable resin with a refractive index very similar to that of the nanoparticles. The resin was applied to surfaces by painting or solution deposition and then cured under ultraviolet light.

The resulting photonic glass scatters red light randomly, while absorbing shorter wavelengths. Lee stresses that this is different from a traditional paint. “The reflected colour is determined by particle size, spacing, refractive-index contrast, and the degree of structural order, rather than by a molecular chromophore alone,” he says. When the researchers reduced the outer diameter of the silica shells, first to 180 nm and then to 160 nm, the particles packed more closely together, producing first green and then deep blue colours.

The explanation for the blue colour is more subtle than for the red. “The gold core is not needed to ‘make’ blue in the same way that a blue dye would,” explains Lee. “However, the gold core can still improve perceived colour purity by reducing broadband diffuse scattering and nonresonant background light. Without this suppression, silica-only photonic glasses tend to look milky or whitish because many wavelengths are scattered together.”
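
The shift from red to green to blue as the particles shrink can be checked with a back-of-envelope estimate. This is not the authors’ model: the Python sketch below simply assumes a Bragg-like condition λ ≈ 2·n_eff·D, with the nearest-neighbour spacing equal to the particle diameter D and an assumed effective index n_eff = 1.45 (close to that of silica). Only the linear scaling of λ with D is robust; the absolute values depend on these assumptions.

```python
def peak_wavelength_nm(diameter_nm: float, n_eff: float = 1.45) -> float:
    """Crude Bragg-like estimate of the reflected peak, λ ≈ 2·n_eff·D.

    Assumes nearest-neighbour spacing equals the particle diameter D and that
    n_eff = 1.45; both are illustrative assumptions, not values from the paper.
    """
    return 2.0 * n_eff * diameter_nm


for d in (230, 180, 160):
    print(f"D = {d} nm -> peak near {peak_wavelength_nm(d):.0f} nm")
```

Under these assumptions the three diameters land near 667 nm, 522 nm and 464 nm, roughly red, green and blue.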

Durable coatings

The researchers are now exploring several possible extensions of their research. They believe that the work could provide easily applied coatings that are durable because the light scattering comes from within the material structure rather than from a surface pigment.

They also believe it could have anti-counterfeiting properties: “In a normal ink or paint, its colour mainly originates from chemical pigments or dyes,” says Lee. “Our material produces a nanoscale structural signature: a specific reflectance spectrum, bandwidth, angular response, and microstructural arrangement determined by the particle diameter, core–shell geometry, refractive-index matching, volume fraction, and assembly pathway. This gives several possible authentication handles.”

Lee believes that it should be possible to reduce the cost of the material using a metal that is cheaper than gold. However, the precious metal is only 0.022% of the film by weight, so the technology may already be economically viable.
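
The 0.022% figure translates directly into a small mass of gold per unit of film. The Python sketch below does the conversion; the price per gram is a user-supplied placeholder rather than a market figure, and the calculation ignores synthesis and processing costs.

```python
def gold_mass_g(film_mass_kg: float, gold_fraction_percent: float = 0.022) -> float:
    """Mass of gold (g) in a film of the given mass, from its weight percentage."""
    return film_mass_kg * 1000.0 * gold_fraction_percent / 100.0


def gold_cost(film_mass_kg: float, price_per_gram: float) -> float:
    """Cost of the gold alone; price_per_gram is a hypothetical input supplied by the user."""
    return gold_mass_g(film_mass_kg) * price_per_gram


print(f"1 kg of film contains {gold_mass_g(1.0):.2f} g of gold")
```

A kilogram of film therefore holds about 0.22 g of gold, so the gold cost per kilogram is simply 0.22 times whatever price per gram applies.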

The film is described in Proceedings of the National Academy of Sciences.

“I think it’s really neat,” says materials scientist Aaswath Raman of the University of California, Los Angeles. “The concept of structural colour has been around for a really long time but to me it’s, like, the last steps before we see it out in the real world.”

He says the largest problem he foresees is the simple economics of competing with the industrially optimized paint industry – even if the technology is, in principle, superior. Nevertheless, he says, “of the technologies we see in research this is likely quite a good candidate for commercialization”. The next step, he says, is to actually find a “first use” application – he suspects the aerospace industry, which values ultralight, durable coatings, could be a candidate.

Physicists create mechanical memory device from slap-bracelet-like structures

Placing the structures on a variably spinning turntable creates a scalable way of addressing single bits of memory

The post Physicists create mechanical memory device from slap-bracelet-like structures appeared first on Physics World.

In today’s technologies, mechanical mechanisms generally provide the brawn while electronics supplies the brains. This is partly because it is challenging to write information into mechanical memories without resetting each bit individually. However, that could change as researchers led by Pedro Reis at École Polytechnique Fédérale de Lausanne in Switzerland and Martin van Hecke at AMOLF in the Netherlands have now found a practical means of writing mechanical bits. Their technique, which they describe in Science Advances, uses structures that resemble children’s slap bracelets placed on a rotating turntable. While they acknowledge it is unlikely to replace electronic memories, they argue that it could have specialist applications and might produce insights that translate into electronic innovations.

“The framework we propose could be very useful, for example, in the domain of physical intelligence, where you provide software with capabilities that don’t require essentially a brain or an electronic control system to do individual tasks,” Reis says.

Mechanics for memory

Reis and van Hecke’s interest in mechanical memory stems from their research on metamaterials, which are materials that are defined not just by their composition, but also by the structures within them. Mechanical systems offer a tangible means of getting to grips with the complex behaviour of these metamaterials. “Often, all sorts of things that we do rely on nonlinear responses,” van Hecke notes, adding that such responses are much easier to study in mechanical systems than in optical devices.

A metamaterial made up of an array of switchable mechanical elements could function as a form of mechanical memory. However, to be practical, it needs to be possible to flip the states of individual mechanical bits using global controls, as opposed to addressing them individually. Otherwise, writing data will be very fiddly.

A solution emerged from Reis’ interest in rotating platforms, which he describes as “a very versatile way of loading mechanical systems”. While the pair had been friends for more than two decades, they had been working independently until, during a visit, the penny dropped and they realized that placing the metamaterial array on a rotating platform could provide the control they needed.

In the rotating frame of the platform, each mechanical object experiences an outward force in the radial direction, known as the centrifugal force, which grows with the square of the angular velocity. If the angular velocity is not constant, the object also experiences a force in the orthogonal, azimuthal direction, known as the Euler force, which is proportional to the angular acceleration. “So you have a complex force and bi-directional field that is highly tuneable,” says Reis. “And this tuneability is what we realized is very powerful.”
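
The two inertial forces follow textbook rotating-frame expressions: the centrifugal force has magnitude mω²r and points outwards, while the Euler force is −m(dω/dt × r), of magnitude mαr, and points along the azimuth against the angular acceleration α. The Python sketch below evaluates both for a point mass; the mass, radius and rotation rates are hypothetical, and the Coriolis force is neglected on the assumption that the object stays fixed relative to the platform.

```python
import math


def rotating_frame_forces(mass_kg: float, r_m: float, omega_rad_s: float, alpha_rad_s2: float):
    """Inertial forces (N) on a point mass at radius r on a turntable, in the rotating frame.

    Returns (radial, azimuthal) components:
      centrifugal: +m * omega**2 * r  (outwards)
      Euler:       -m * alpha * r     (azimuthal, opposing the angular acceleration)
    Coriolis force is ignored because the mass is assumed not to move on the platform.
    """
    radial = mass_kg * omega_rad_s ** 2 * r_m
    azimuthal = -mass_kg * alpha_rad_s2 * r_m
    return radial, azimuthal


# Hypothetical numbers: 1 g at 5 cm from the axis, spinning at 10 rad/s
for alpha in (0.0, 50.0, -50.0):
    fr, ft = rotating_frame_forces(1e-3, 0.05, 10.0, alpha)
    angle = math.degrees(math.atan2(ft, fr))
    print(f"alpha={alpha:+.0f} rad/s^2: radial {fr * 1e3:.1f} mN, "
          f"azimuthal {ft * 1e3:+.1f} mN, direction {angle:+.1f} deg")
```

With α = 0 the force is purely radial (5.0 mN here); an angular acceleration of ±50 rad/s² adds a 2.5 mN azimuthal component and tilts the net force by about 26.6° to one side or the other. Setting ω and α independently thus controls both the size and the direction of the force, which is the tuneability Reis describes.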

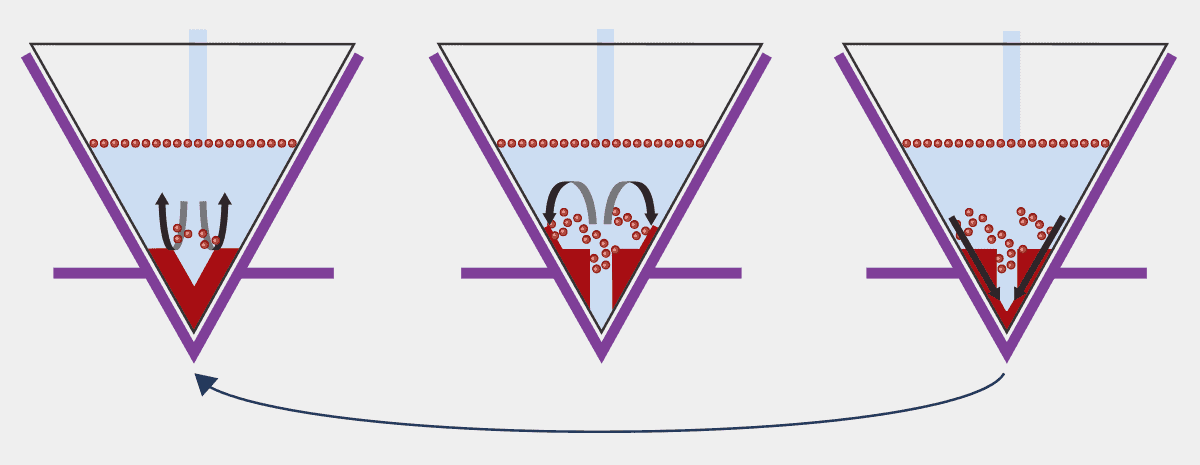

A rotating array

To construct their array, the researchers used clamped beams with two stable mechanical states – a little like a slap bracelet can be coiled up or flat, except these beams could either curve to the right or to the left. To individually address different beams, they ensured that each beam was unique in its width, the angle it was clamped at, and so on, all of which affect how much force is needed for a beam to ping into the opposite state. By tuning the parameters of each clamped beam and the angular acceleration of the rotating platform, they could engineer the applied force to switch (or not switch) specific beams, thereby writing data into the array purely by rotating the platform.
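
Why distinct switching thresholds make global writing possible can be illustrated with a toy model, which is not the authors’ design. The Python sketch below treats each beam as a symmetric bistable element that snaps to +1 (curved one way) when a single global drive, standing in for the Euler force set by the angular acceleration, exceeds its threshold, and to −1 when the drive falls below minus that threshold. One simple strategy is then to set the beams from the stiffest to the softest with pulses of decreasing size: each pulse also disturbs the softer beams, but those are overwritten by later, weaker pulses. The thresholds and target pattern are hypothetical, and the model requires all thresholds to differ.

```python
from typing import List


def apply_pulse(states: List[int], thresholds: List[float], drive: float) -> None:
    """Toy bistable-beam response: beam i snaps to +1 if drive > t_i, to -1 if drive < -t_i."""
    for i, t in enumerate(thresholds):
        if drive > t:
            states[i] = +1
        elif drive < -t:
            states[i] = -1


def write_protocol(thresholds: List[float], target: List[int]) -> List[float]:
    """Signed drive pulses that write `target` into beams with distinct thresholds.

    Beams are set from the largest threshold to the smallest. Each pulse amplitude lies
    between the current beam's threshold and that of the previously set (stiffer) beam,
    so already-written stiffer beams are left untouched.
    """
    order = sorted(range(len(thresholds)), key=lambda i: thresholds[i], reverse=True)
    pulses = []
    upper = None  # threshold of the previously set, stiffer beam
    for i in order:
        t = thresholds[i]
        amplitude = 1.1 * t if upper is None else 0.5 * (t + upper)
        pulses.append(target[i] * amplitude)
        upper = t
    return pulses


thresholds = [1.0, 2.0, 3.0, 4.0, 5.0]  # hypothetical, in arbitrary drive units
target = [+1, -1, -1, +1, -1]
states = [-1] * 5
for p in write_protocol(thresholds, target):
    apply_pulse(states, thresholds, p)
print(states)
```

Running it prints `[1, -1, -1, 1, -1]`, matching the target, even though no beam was ever addressed individually.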

Doing this accurately requires a level of precision in acceleration control that surpasses what standard lab motors can achieve. However, the researchers say they were able to team up with a local company that had designed high-spec rotating platforms for its high-throughput silicon-chip production process. By equipping platforms with five tailored clamped beams and programming the right rotation functions, they showed they could write the letters of the alphabet in ASCII script.
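
The article does not say exactly how letters were encoded in five beams. Standard ASCII codes are seven bits long, but for the capitals A to Z (codes 65 to 90) the lowest five bits run from 1 to 26 and are all different, so one plausible scheme, assumed here purely for illustration, is to store just those five bits. The Python sketch below does that and maps each bit to a beam state (+1 or −1); which curvature stands for 1 is an arbitrary choice.

```python
from typing import List


def letter_to_bits(letter: str, n_bits: int = 5) -> List[int]:
    """Lowest n_bits of a letter's ASCII code, most significant bit first.

    For 'A'-'Z' (ASCII 65-90) the lowest five bits run from 1 to 26, so five
    two-state beams are enough to tell the 26 letters apart (an assumed encoding).
    """
    ch = letter.upper()
    if len(ch) != 1 or not ("A" <= ch <= "Z"):
        raise ValueError("expected a single Latin letter")
    code = ord(ch)
    return [(code >> k) & 1 for k in reversed(range(n_bits))]


def bits_to_beam_states(bits: List[int]) -> List[int]:
    """Map bit 1 to one curvature (+1) and bit 0 to the other (-1); the mapping is arbitrary."""
    return [1 if b else -1 for b in bits]


for letter in "AZ":
    bits = letter_to_bits(letter)
    print(letter, bits, bits_to_beam_states(bits))
```

In this scheme “A” becomes 00001 and “Z” becomes 11010, each a distinct pattern of five beam states.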

“This is a significant advance because it points toward future smart devices and robots that can be reprogrammed remotely without complex wiring or electronics, using only carefully designed motion‑based signals driven by a sole dynamic driving strategy,” explains Damiano Pasini of McGill University, Canada, who studies systems for mechanical computing but was not involved with this work directly.

Reis says he is excited about the scalability of the approach and its potential in high-throughput experiments. Meanwhile, van Hecke is looking into how the idea might transfer to other systems, such as applying engineered force functions to crumpling sheets or complex glasses. “It just opens up possibilities for both studies, really fundamental studies of complex systems, but also real applications where you use this dynamic idea,” he tells Physics World.

The post Physicists create mechanical memory device from slap-bracelet-like structures appeared first on Physics World.

Inside the technologies powering tomorrow’s grids

Expert insights reveal why VSC converters and evolving semiconductor devices will dominate HVDC

The post Inside the technologies powering tomorrow’s grids appeared first on Physics World.

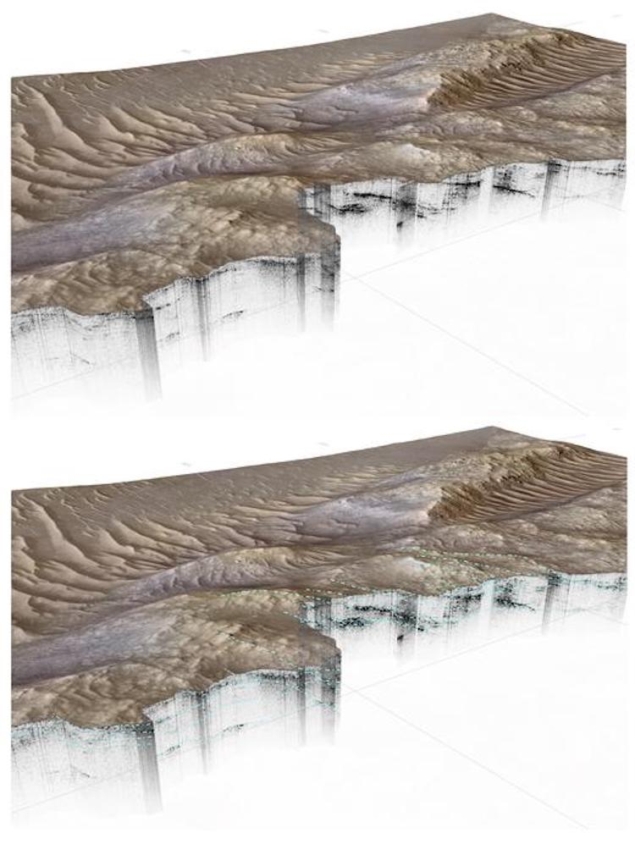

High‑voltage transmission systems are a key part of power grids, transporting electricity from where it is generated to where it is used. Electricity is moved at high voltage and low current to reduce losses and improve efficiency. These systems are essential for grid stability, integrating renewable energy, and enabling long‑distance power transfer. There are two main high‑voltage direct current (HVDC) technologies: line‑commutated converters (LCC) and voltage‑source converters (VSC). LCCs are an older technology that use high‑power semiconductor switches called thyristors and are suited to very large power transfers. VSCs are a newer technology that use insulated‑gate bipolar transistors (IGBTs), allowing faster control of power flow, better stability, and more compact converter stations.
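The benefit of high voltage follows from Ohm’s law. For a fixed transmitted power P = VI, the current is I = P/V, so the resistive loss I²R falls as 1/V². A minimal sketch in this simple DC picture, with made-up line parameters:

```python
def resistive_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Ohmic loss I**2 * R in a line carrying power P at voltage V, with I = P / V."""
    current_a = power_w / voltage_v
    return current_a**2 * resistance_ohm


# Illustrative numbers only: 1 GW sent through a line with 10 ohm total resistance.
P, R = 1e9, 10.0
for kv in (400, 800):
    loss = resistive_loss_w(P, kv * 1e3, R)
    print(f"{kv} kV: {loss / 1e6:.1f} MW lost ({100 * loss / P:.2f}% of the power)")
```

Doubling the voltage quarters the ohmic loss in this idealized model, which ignores converter-station losses and, for AC lines, reactive effects.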

In this study, the researchers interviewed thirteen leading experts to understand which HVDC technology is likely to dominate in the future, how semiconductor devices may evolve, and what cost or supply issues might arise. The experts agreed that thyristors used in LCCs are a mature technology with limited room for improvement, and that demand for LCC systems is declining in North America and Europe, though they will remain important in regions requiring very high‑capacity transmission such as China and India. In contrast, IGBTs used in VSC systems are expected to continue improving, particularly in reliability, packaging, and voltage capability, reflecting the growing use of VSCs in Europe and North America. Some experts even suggested that VSC converter stations may now be comparable in cost to, or cheaper than, LCC stations, and that further improvements in IGBT cost and performance could reduce VSC system costs further.

There was debate about whether silicon‑carbide (SiC) MOSFETs could eventually replace IGBTs in VSC systems. While SiC devices offer advantages in high‑frequency applications, they currently cannot handle the very high currents required for HVDC, and challenges remain in packaging and long‑term reliability. Experts also noted that although global demand for power electronics is rising, this is unlikely to constrain HVDC development; instead, shortages of other components, particularly high‑voltage transformers, may pose greater risks. Overall, this research clarifies which power‑electronic technologies are poised to shape the next generation of HVDC systems and highlights why future grids are expected to rely increasingly on VSC converters and advanced semiconductor devices.

Read the full article

Expert views of power electronics in the future high voltage power system

Spyridon Pavlidis et al 2026 Prog. Energy 8 015003

Do you want to learn more about this topic?

Application of reinforcement learning in planning and operation of new power system towards carbon peaking and neutrality Fangyuan Sun et al. (2023)

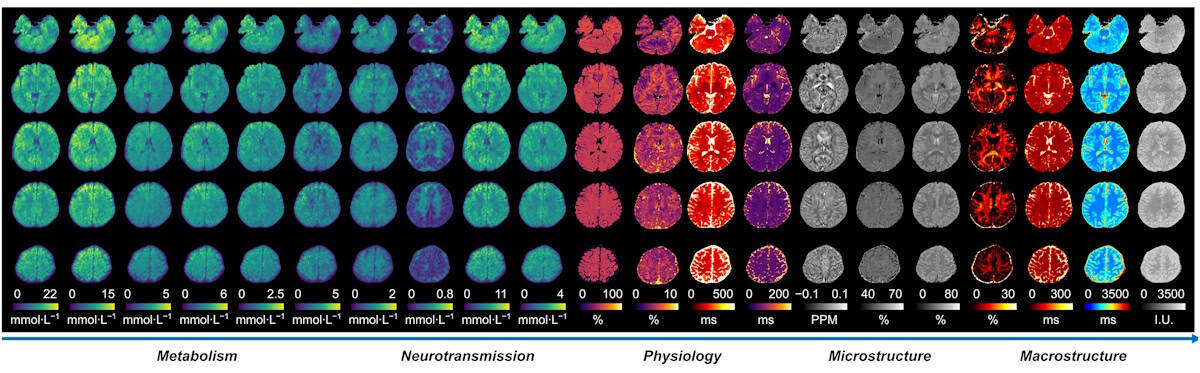

From equations to nuclear medicine: Keamogetswe Ramonaheng on building medical physics in Africa

Keamogetswe Ramonaheng discusses her life in medical physics

The post From equations to nuclear medicine: Keamogetswe Ramonaheng on building medical physics in Africa appeared first on Physics World.

For Keamogetswe Ramonaheng, physics was never just about equations – it was about clarity. “From a young age, I was attracted to mathematics and science as a way of understanding complex phenomena through a structured approach,” she says. “Physics was the area that spoke to me the most because it is the foundation for the fundamental principles that govern the natural world.”

Ramonaheng is head of medical physics and radiobiology at the Nuclear Medicine Research Infrastructure (NuMeRI) in Pretoria, South Africa, where she applies the principles of radiation science to treat cancer. NuMeRI, which opened in 2024, is the first research facility in Africa dedicated to nuclear medicine. It’s a joint venture between the Steve Biko Academic Hospital, the University of Pretoria, iThemba Laboratories for Accelerator-Based Sciences and the Nuclear Energy Corporation of South Africa.

Ramonaheng’s academic journey began at the University of the Free State (UFS), where she completed her undergraduate and honours studies before starting an internship at Universitas Academic Hospital in Bloemfontein. There she saw how rigorous physics training can lead to tangible clinical benefits. “The ability to comprehend and harness the interaction between radiation and matter in the human body demonstrated the power and relevance of scientific inquiry,” she recalls.

Thanks to a fellowship from the International Atomic Energy Agency (IAEA), Ramonaheng completed a clinical placement at Royal North Shore Hospital in Sydney, Australia. She later continued her postgraduate studies at UFS, becoming the first Black South African woman to earn a PhD in medical physics for nuclear medicine. “In many ways, nuclear medicine found me,” says Ramonaheng, who is grateful for the encouragement of various senior staff members who saw her potential and guided her into the field.

Multifaceted role

Following a spell as an independent medical physicist and manager at Universitas Academic Hospital and as a lecturer at UFS, Ramonaheng joined NuMeRI and the University of Pretoria in 2024. Together with the team of scientists she leads, Ramonaheng oversees the safe and effective use of ionizing radiation at NuMeRI to diagnose and treat disease.

It’s a varied role, which stretches from providing patient-focused clinical services to carrying out applied research. “We integrate research with operations,” says Ramonaheng. “That requires careful planning and rigorous quality assurance, ensuring that innovation does not compromise safety.”

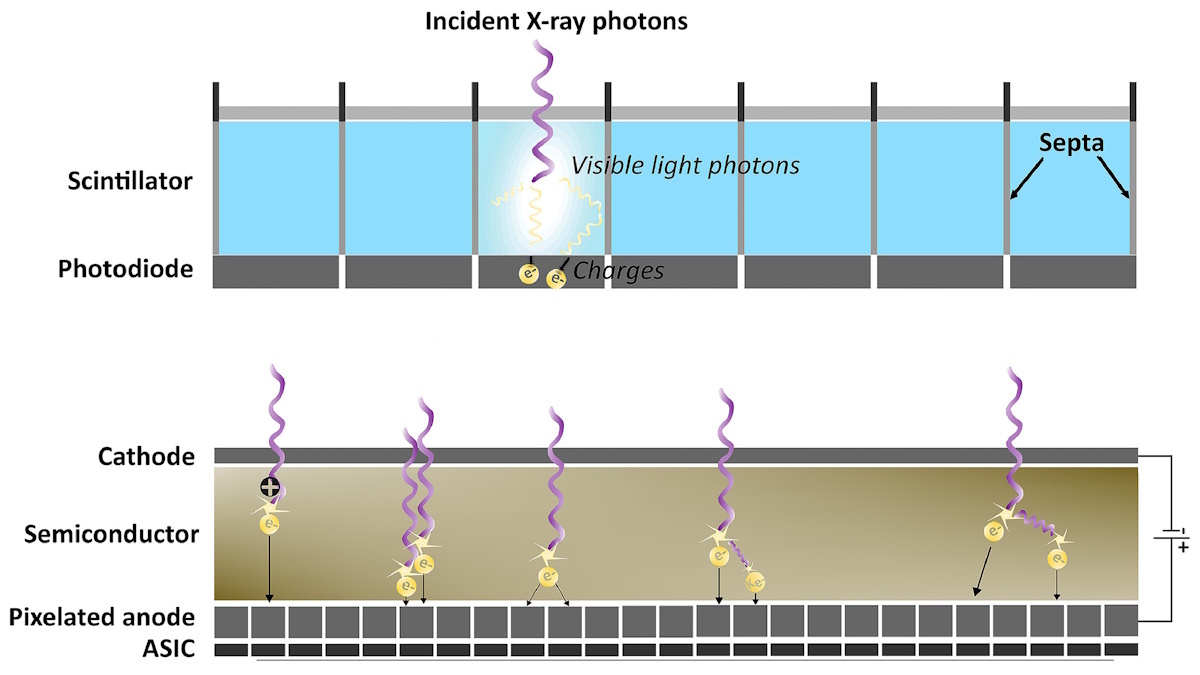

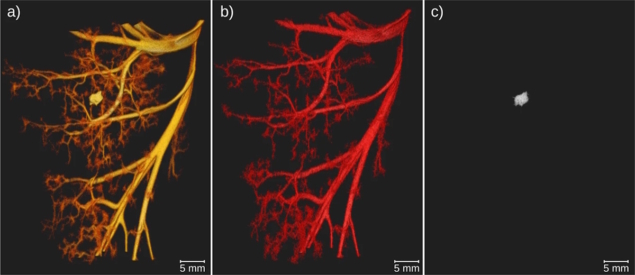

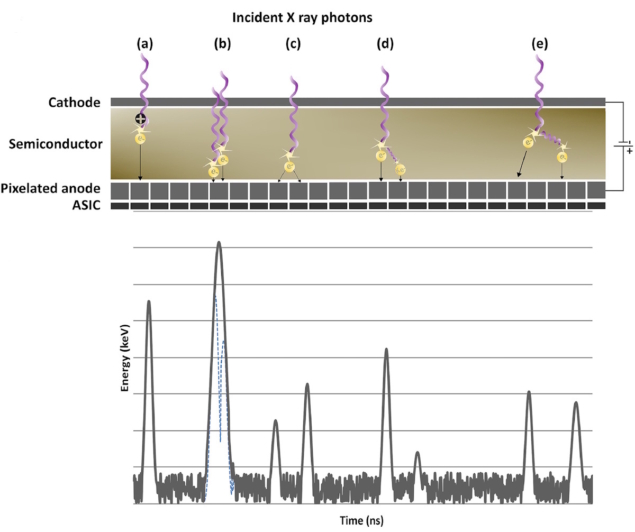

Among her duties, Ramonaheng carries out dosimetry calculations for innovative radiopharmaceuticals, works on new forms of quantitative imaging, and helps to develop novel radionuclide therapies, including using alpha particles to treat cancer. She also uses gamma-ray cameras equipped with highly sensitive cadmium-zinc-telluride detectors, which allow radiopharmaceuticals to be quantified and imaged more precisely.
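The article does not describe NuMeRI’s dosimetry workflow. As a generic illustration only, the textbook MIRD approach estimates the absorbed dose as D = Ã × S, where Ã is the time-integrated (cumulated) activity in a source region and S is a tabulated dose per unit cumulated activity. If the activity clears mono-exponentially, with physical and biological half-lives combining as 1/T_eff = 1/T_phys + 1/T_bio, then Ã = A₀ T_eff / ln 2. The function names and every number below are hypothetical; clinical practice sums over many source regions and fits measured time–activity curves from quantitative imaging.

```python
import math


def effective_half_life_h(t_phys_h: float, t_bio_h: float) -> float:
    """Combine physical decay and biological clearance: 1/T_eff = 1/T_phys + 1/T_bio."""
    return 1.0 / (1.0 / t_phys_h + 1.0 / t_bio_h)


def cumulated_activity_mbq_h(a0_mbq: float, t_eff_h: float) -> float:
    """Time-integrated activity for mono-exponential clearance: A0 / lambda_eff."""
    decay_const_per_h = math.log(2) / t_eff_h
    return a0_mbq / decay_const_per_h


def absorbed_dose_gy(a0_mbq, t_phys_h, t_bio_h, s_gy_per_mbq_h):
    """MIRD-style estimate D = A_tilde * S for a single source region."""
    t_eff = effective_half_life_h(t_phys_h, t_bio_h)
    return cumulated_activity_mbq_h(a0_mbq, t_eff) * s_gy_per_mbq_h


# Illustrative inputs only: hypothetical activity, half-lives and S value.
dose = absorbed_dose_gy(a0_mbq=500.0, t_phys_h=160.0, t_bio_h=80.0, s_gy_per_mbq_h=1e-4)
print(f"Estimated absorbed dose: {dose:.2f} Gy")
```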

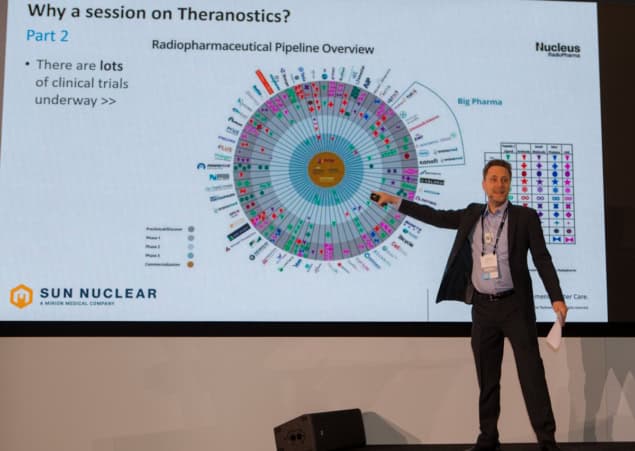

Ramonaheng is particularly interested in “theranostics” – a form of “precision medicine” that combines therapy with diagnostics. It involves giving a patient a tumour-targeting molecule labelled with a radionuclide. This allows the tumour to be visualized using techniques such as positron emission tomography (PET) or single-photon emission computed tomography (SPECT). The same molecule – or one similar to it – is then used to deliver a therapeutic radionuclide directly to the tumour.

Daily challenges

For Ramonaheng, a typical day is fast-paced. Mornings often begin with her overseeing radiation-safety protocols and checking that imaging and radiation-counting equipment is performing optimally and meets quality-assurance standards. Throughout the day, Ramonaheng also oversees all operational medical-physics activities and carries out her duties as chair of NuMeRI’s radiation protection committee.

As the day progresses, she might find herself doing anything from reviewing clinical theranostics dosimetry workflows to carrying out patient-specific dose calculations or evaluating quantitative imaging metrics from SPECT/CT and PET/CT systems. Other tasks include reviewing research protocols for cancer theranostics, mentoring postgraduate students at the University of Pretoria, and examining clinical trials.

Ramonaheng works in a highly interdisciplinary environment, collaborating with radiographers, nurses, radiochemists, radiopharmacists, medical physicists and clinicians to address live issues in real time. “Innovation accelerates when silos are dismantled,” she says.

The work is not without its challenges. Funding for postgraduate training is a persistent concern. Clinical physics is also a highly specialized field, which means it can be hard to recruit people with the right skills, who might be drawn to better-paid industry jobs. In addition, NuMeRI is an operationally complex mix of advanced imaging systems, radiopharmaceuticals and clinical regulations, which requires good project-management and planning skills.

But Ramonaheng, who recently won two awards at the 8th Theranostics World Congress in Cape Town, feels the benefits outweigh the challenges. “It is very fulfilling to see the translation of research into clinical application,” she says. Just as gratifying, she adds, is watching her students move from their studies to publications and clinical applications. “You see the entire process of scientific advancement.”

A more promising future

Looking ahead, Ramonaheng envisages a growing use of artificial intelligence (AI) in her work. She also collaborates with national and international partners to automate workflows and enhance efficiency, precision and patient-centred care. Another ambition for Ramonaheng is to further strengthen NuMeRI as an Africa-wide hub for research, clinical service and training – a vision reinforced by the IAEA recently naming NuMeRI as one of 18 global “anchor centres” for its work in radiotherapy and medical imaging.

Ramonaheng believes medical physics will grow rapidly in Africa over the next 10 years, fuelled by an expansion of theranostics and precision medicine. Her hope is to guide this growth through mentorship and leadership, ensuring that Africa develops its own talent pool of medical physicists who can address the continent’s unique healthcare needs.

Africa suffers, for example, from limited access to advanced imaging and targeted therapies. Ramonaheng’s aim is to optimize personalized and precision medicine for cancer patients, ultimately improving treatment outcomes and quality of life. Eventually, she hopes, medical physics will be recognized as a profession across the continent. “We are building not only research outputs but human capital.”

Being a pioneer in the field has required resilience on her part. “Competence must be coupled with confidence,” says Ramonaheng, who has had to learn the unwritten rules of a world dominated by men. As a mentor, her guiding principle is the African concept of motho ke motho ka batho babang – a person is a person only through others. “Leadership is not only about the creation of paths,” she says, “but the creation of paths where there were no paths previously.”

Her message to young physicists – particularly women and those from other underrepresented groups – is clear. “Medical physics is a dynamic and impactful field at the intersection of physics, medicine and technology,” she says. “It allows you to see the direct translation of science to patients.” Medical physics requires resilience, curiosity and commitment, but for Ramonaheng its beauty is that equations don’t stay on paper – they become a tool for healing.

Institute of Physics president Paul Howarth outlines his vision for physics

Paul Howarth is set to be IOP president until 2029

The post Institute of Physics president Paul Howarth outlines his vision for physics appeared first on Physics World.

With a PhD in nuclear physics, Paul Howarth has had a long career in the nuclear sector, working on the European Fusion Programme and at British Nuclear Fuels, as well as co-founding the Dalton Nuclear Institute at the University of Manchester. He was a non-executive board director of the National Physical Laboratory and served as chief executive officer of the National Nuclear Laboratory.

Howarth became president-elect of the Institute of Physics (IOP) in September 2025. In February he became IOP president after space physicist Michele Dougherty stepped aside from the role to avoid any conflicts of interest given her position as executive chair of the Science and Technology Facilities Council. Howarth is set to be IOP president until 2029. Physics World recently caught up with Howarth to find out more about his career and vision for physics.

What originally sparked your interest in physics?

I think it probably came from my father. He was a research chemist. We lived in Cheshire near the Jodrell Bank Observatory and its iconic Lovell Telescope. I was fascinated by that and it captivated my interest in astronomy and so I did a degree in physics and astrophysics at the University of Birmingham.

You stayed at Birmingham to do a PhD in nuclear fusion. What attracted you to that field?

It goes back to my interest in astronomy and the ability to use mathematics to describe the universe. Yet by the end of the degree, I was fascinated by nuclear fusion as an energy source and a sustainable means of clean energy for society. During my PhD, I got to work on the JET tokamak in Oxfordshire, which was wonderful. It was when JET was doing its first deuterium-tritium plasma shot, which was an exciting time.

After your PhD, you worked for British Nuclear Fuels. Why did you make that move and what appealed about the commercial side of physics?

In the 1990s there was quite a bit of uncertainty about the direction of nuclear fusion, but I’d always been fascinated by the huge monolithic structures of nuclear power stations. So I didn’t hesitate when an opportunity arose to work at Sellafield – a huge site in north-west England with more than 200 nuclear facilities – on understanding the physics of plutonium.

You then served as chief executive officer of the UK’s National Nuclear Laboratory. How did that come about?

At British Nuclear Fuels I was working to build the case for the next generation of nuclear power plants. But in the early 2000s it was less clear that nuclear was going to be part of the UK’s energy policy. So British Nuclear Fuels was broken up into organizations such as the Nuclear Decommissioning Authority. But I was determined to continue to make the case for new nuclear build and ended up helping the UK government create a National Nuclear Laboratory to maintain sovereign nuclear capability, becoming chief executive officer in 2011.

What did that role involve?

We had contracts to support all aspects of the UK’s nuclear programme as well as build the case for future nuclear. We worked on the front end of the fuel cycle, on reactor technology, on future reactors, on legacy waste management and decommissioning. I had the responsibility for running about £2–3bn of critical nuclear real estate and infrastructure.

Many countries, not just the UK, are showing a renewed enthusiasm for nuclear – what do you attribute that to?

Yes, it’s a fascinating time for nuclear. I think things are heading now towards small modular reactors and advanced reactor systems. Larger nuclear plants are more efficient but it is possible to trade that off for smaller plants. This opens up the opportunity for others to potentially invest in nuclear. So we see, for example, individuals like Bill Gates and others who are looking at nuclear power.

Do you see parallels with the fusion industry and how that has grown in the past decade?

Absolutely. I think a very similar thing has happened. Of course, there are still engineering challenges associated with scaling up fusion, but good progress is being made. And other players and entities, like Tokamak Energy and First Light Fusion, are looking at entering the market, which is great.

Having retired from the National Nuclear Laboratory (NNL) in 2025, what drew you to the role of IOP president?

It was the opportunity to give something back to physics. Physics is such an important discipline that is needed across all aspects of society and through my time working in physics, I’ve seen the benefits that it brings.

What things excite you as you take up this position?

When we look across society, the impact that physics is having is massive – whether that is in data centres, artificial intelligence, net zero, medicine or even food supplies. One of the things I would like to achieve during my presidency is to qualify and quantify that impact. The role that physics can play is going to be fascinating and to be part of that journey is exciting.

What are your priorities as president?

One is to nudge the dial on getting physics recognized in society as a really valuable and important discipline. This includes making sure that schools are properly equipped and resourced for teaching physics as well as having more teachers with a physics background. This would then hopefully translate into more people studying the subject at A-level and degree level.

UK Research and Innovation (UKRI) recently announced funding changes that will see cuts to particle and nuclear physics. How do you see that impacting physics?

Yes, it’s a challenging time at the moment. We’ve been working hard to ensure that the impact is properly assessed and that we are doing what we can to champion and support some of these critical disciplines in physics. I can understand the direction of travel from UKRI, which is about ensuring that its investment underpins and supports economic growth. And there are some key critical disciplines such as quantum computing, autonomous systems and robotics, and fusion that continue to be supported and where funding has actually increased. But what we are concerned about is the potential adverse or detrimental effects of a reprioritization that may move funding away from some critical areas in physics, such as particle physics, astronomy and nuclear physics. That is a concern because they are fundamentally important disciplines.

Could there be an impact on people wanting to go into these areas?

What I worry about is the negative impact on university physics departments that work in those areas. It’s also those areas of physics that really captivate people to study the subject. But there is a knock-on effect on other areas too because many people who study physics go into engineering, which is crucial for other industry sectors – whether it’s around detectors, data systems, data acquisition, electronics, power systems, automotive, aerospace, defence or nuclear energy. So I worry that the reprioritization is not properly assessing the impact and the benefit the subjects have.

How is the IOP tackling this issue?

We need to ensure that we fight the case for those areas of physics, because they are so important. We need to find a path that ensures we maintain these critical areas but also ensure that investment is being made to support economic growth as a whole.

How do you strike that balance between being vocal about the cuts, but also needing to support emerging areas of physics?

I think that’s the challenge – to effectively support all aspects of physics. I don’t want to be in a position where we are pitching one area against another. It’s the totality of the capability, and that’s all aspects of physics and the interrelationship between those disciplines too. We should celebrate where there is growth in new and exciting areas. But equally, we must protect those areas that are fundamental pillars of physics.

Are there any opportunities even in this difficult situation?

As we continue to engage government and other stakeholders on these funding changes, there is an opportunity to define physics’ impact as a benefit to society as well as big opportunities for science-driven growth arising from increased investment in key areas. I believe that a developed nation like the UK, which has a very good international standing, should continue to invest in all aspects of the discipline.

What other challenges lie ahead?

It is really important that we remain an inclusive discipline and we also need to get our heads around the impact of AI on physics. The IOP has already done some work with the community in this area with the Physics and AI Impact Pathfinder report, which highlighted the role of physics as both enabler and beneficiary of AI, and also explored the discipline-specific views physicists hold regarding AI in science and society. I am interested in us understanding more about what AI means for physics and being a physicist, how we embed AI in the training of physicists so physicists can use it and become better physicists. I would be keen for the IOP to carry out more work to understand the impact it’s clearly going to have.

How do you see the subject evolving over the coming decade?

I think that society is embracing what science and technology, and in particular physics, can do. We need to help ensure that the next generation of physicists are being appropriately trained to become good physicists. In fundamental physics, there are some fascinating things developing like bringing together cosmology and quantum physics, understanding quantum gravity, the nature of time and what’s happening down at the particle physics level. It feels as if something’s coming together. I’d love to be around when physics can finally pull all of that together and go “we’ve got it – the light bulb’s gone on”.

- You can listen to Paul Howarth in conversation with Michael Banks on the 14 May 2026 episode of the Physics World Weekly podcast

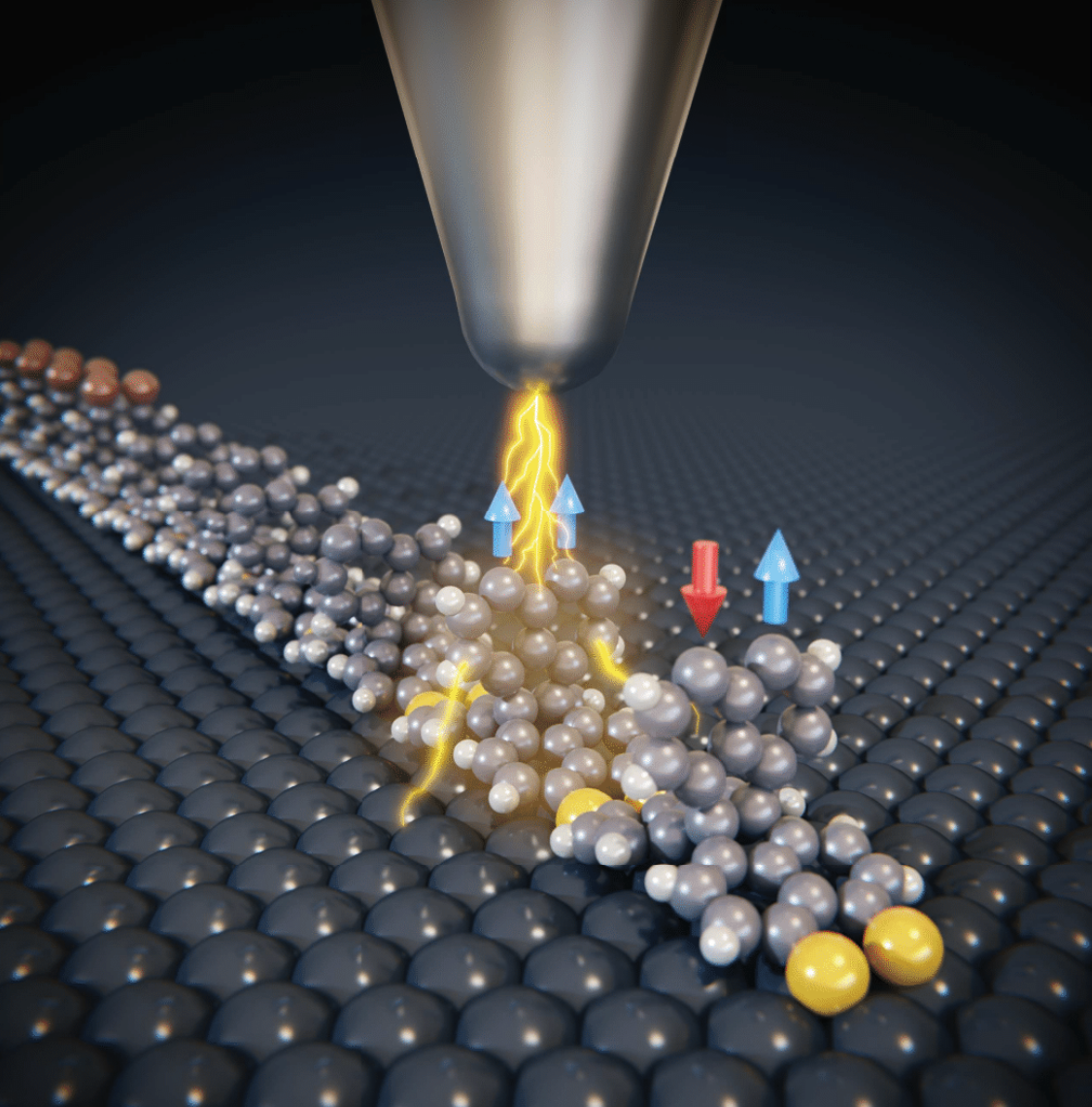

Molecular spin sensor takes the temperature of cancer cells

New class of biocompatible quantum nanosensor unveiled in Japan

The post Molecular spin sensor takes the temperature of cancer cells appeared first on Physics World.

Researchers in Japan have succeeded in measuring the temperature inside living cells with high precision using a new class of biocompatible quantum nanosensor – something that has been difficult to do until now. If improved, the nanosensor could be used to characterize a wide range of biological phenomena and so help in disease diagnosis, they say.

Recent years have seen the advent of a new generation of nanoscale quantum sensors that can detect the tiny magnetic fields of biological systems. Some of these sensors rely on photons and others on electrons or spin defects – typically in diamond specially engineered with nitrogen–vacancy (NV) defects. Such a defect is made by removing two carbon atoms from the diamond lattice and replacing one with a nitrogen atom. The other “hole” is left empty, thereby creating a vacancy. The spin state of the defect is influenced by the local magnetic field and can be “read out” from the way the defect fluoresces.

Although this type of quantum sensor is a powerful tool, and biocompatible, it does have certain limitations. For one, it can be structurally inhomogeneous, which affects how it detects temperature and other physical or chemical parameters inside biological cells.

A more homogeneous structure

Even though the new molecular quantum nanosensor (MoQN) works in the same way as these conventional devices, it does not suffer from this problem, explain Nobuhiro Yanai of the University of Tokyo and Hitoshi Ishiwata of the National Institutes for Quantum Science and Technology (QST), who led this research effort. This is because it has a more homogeneous structure and does not contain any defects. Instead, it is made by embedding molecular spin qubits, in this case fabricated from pentacene, in nanocrystals of para-terphenyl. This design makes the structure uniform on a molecular scale and preserves the quantum coherence of the spin qubits. The nanocrystals are then coated with Pluronic F127, a biocompatible surfactant.

By detecting the spin direction of the “excited triplet state” of the pentacene qubits using a technique known as optically detected magnetic resonance (ODMR), the researchers can precisely determine the temperature of the qubits’ surroundings from the position of the ODMR peak. When they tested their method inside the cytoplasm of living cancer cells, they found that the intracellular temperature was consistently higher than that of the surrounding medium.
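Reading temperature from the peak position amounts to locating the ODMR resonance and converting its shift with a calibration curve. The sketch simulates a single Lorentzian dip, locates it by three-point parabolic interpolation (a simple stand-in for the full lineshape fits typically used in practice) and applies a linear calibration. The reference frequency, the −0.1 MHz/K slope and the linewidth are hypothetical placeholders, not values reported for these nanosensors.

```python
def odmr_dip(f_mhz, centre_mhz, fwhm_mhz=2.0, contrast=0.05):
    """Normalized fluorescence with one Lorentzian ODMR dip (1.0 = off resonance)."""
    half = fwhm_mhz / 2
    return 1.0 - contrast * half**2 / ((f_mhz - centre_mhz) ** 2 + half**2)


def dip_centre(freqs, signal):
    """Estimate the dip position from the lowest sample and its two neighbours.

    A parabola through three evenly spaced points has its vertex at
    offset = (y0 - y2) / (2 * (y0 - 2*y1 + y2)) grid steps from the middle point.
    """
    i = min(range(1, len(signal) - 1), key=lambda k: signal[k])
    y0, y1, y2 = signal[i - 1], signal[i], signal[i + 1]
    step = freqs[1] - freqs[0]  # assumes an evenly spaced frequency sweep
    offset = (y0 - y2) / (2 * (y0 - 2 * y1 + y2))
    return freqs[i] + offset * step


def temperature_from_peak(peak_mhz, ref_peak_mhz, ref_temp_c, slope_mhz_per_k):
    """Invert a linear calibration: peak = ref_peak + slope * (T - ref_temp)."""
    return ref_temp_c + (peak_mhz - ref_peak_mhz) / slope_mhz_per_k


# Hypothetical calibration, NOT measured values for pentacene in para-terphenyl.
REF_PEAK_MHZ, REF_TEMP_C, SLOPE_MHZ_PER_K = 1400.0, 25.0, -0.1

true_temp_c = 36.7
centre = REF_PEAK_MHZ + SLOPE_MHZ_PER_K * (true_temp_c - REF_TEMP_C)
freqs = [1390.0 + 0.1 * k for k in range(201)]
signal = [odmr_dip(f, centre) for f in freqs]
estimate = temperature_from_peak(dip_centre(freqs, signal), REF_PEAK_MHZ, REF_TEMP_C, SLOPE_MHZ_PER_K)
print(f"true {true_temp_c:.2f} C, estimated {estimate:.2f} C")
```

Because the temperature comes from a shift of the line centre, anything that broadens or scatters the line from particle to particle directly limits the achievable precision.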

Yanai says he embarked on this study after reading about the work of Sam Bayliss’ group at the UK’s University of Glasgow, and Ashok Ajoy’s group at the University of California, Berkeley in the US on ODMR in pentacene-doped para-terphenyl crystals. He says he immediately got the idea that nanocrystals of this material could be used for quantum sensing inside cells. This was because his group had already developed such nanocrystals for a different purpose in previous research.

Ensuring biocompatibility

“I then spoke with Hitoshi Ishiwata, who is an expert in quantum sensing using NV centres,” he recalls. “While many molecular qubits have been developed to date, there had been no examples demonstrating their sensing ability within living cells.”

The project required materials science expertise, he tells Physics World, and in particular, finding out how to reduce the material to the nanoscale and ensuring it was biocompatible.

“We already knew that nanodiamonds are good quantum sensors for temperature measurements, but I had noticed a practical limitation: their ODMR spectra often vary significantly from particle to particle,” he says. “This spectral dispersion can introduce errors, especially when trying to perform precise measurements at the single-particle level.”

Replacing hydrogen with deuterium

The researchers thought they had overcome this problem during the first run of their experiments because they found that different particles showed identical ODMR spectra. However, their joy quickly waned when they observed that the spectra were still broadened by hyperfine interactions between the electron spins of the pentacene molecules and the nuclear spins of hydrogen atoms.

To improve the spectral resolution, Ishiwata says he suggested chemically modifying the molecule by replacing its hydrogen atoms with deuterium. And the technique worked: “the hyperfine broadening was strongly suppressed, allowing us to determine the ODMR spectra much more precisely.”
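A back-of-envelope estimate shows why deuteration helps. A hyperfine coupling scales with the nuclear gyromagnetic ratio, and deuterium’s is about 6.5 times smaller than hydrogen’s. Because deuterium has nuclear spin 1 rather than 1/2, the root-mean-square broadening from many unresolved nuclei, which scales as A√(I(I+1)), shrinks by a smaller factor of roughly four. The sketch assumes isotropic couplings at unchanged molecular positions and ignores deuterium’s quadrupole interaction; it is not the researchers’ analysis.

```python
import math

# Gyromagnetic ratios gamma / (2*pi) in MHz per tesla, and nuclear spins I.
NUCLEI = {
    "1H": {"gamma_mhz_per_t": 42.577, "spin": 0.5},
    "2H": {"gamma_mhz_per_t": 6.536, "spin": 1.0},
}


def coupling_ratio(new: str, old: str) -> float:
    """Ratio of hyperfine coupling constants at the same position (scales with gamma)."""
    return NUCLEI[new]["gamma_mhz_per_t"] / NUCLEI[old]["gamma_mhz_per_t"]


def rms_width_ratio(new: str, old: str) -> float:
    """Ratio of root-mean-square hyperfine broadening from many unresolved nuclei.

    The second moment of the broadened line scales as A**2 * I * (I + 1),
    so the rms width scales as A * sqrt(I * (I + 1)).
    """
    def spin_factor(nucleus: str) -> float:
        i = NUCLEI[nucleus]["spin"]
        return math.sqrt(i * (i + 1))

    return coupling_ratio(new, old) * spin_factor(new) / spin_factor(old)


print(f"coupling ratio D/H:  {coupling_ratio('2H', '1H'):.3f}")
print(f"rms width ratio D/H: {rms_width_ratio('2H', '1H'):.3f}")
```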

These findings, which are detailed in Science Advances, show that MoQNs are a chemically versatile platform for quantum sensing in living cells and that they can operate directly inside them while maintaining the precision needed for absolute thermometry, he says. Their appeal also lies in the fact that their structures can be easily modified.

It will not all be plain sailing, however, adds Yanai. MoQNs cannot yet target specific organelles within cells, so endowing them with this targeting capability is an important future challenge. “What is more, their size has been limited to around 200 nm so far, so creating smaller MoQN particles will be crucial,” he says.

Quiz of the week: CERN may have made a quark–gluon plasma by colliding which nuclei?

Have you been keeping up to date with physics news? Try our short quiz to find out

The post Quiz of the week: CERN may have made a quark–gluon plasma by colliding which nuclei? appeared first on Physics World.

Fancy some more? Check out our puzzles page.

AI-led solutions of Erdős problems spark debate over the future of mathematics

Problems that defeated human mathematicians for decades are now solved, but for whose benefit?

The post AI-led solutions of Erdős problems spark debate over the future of mathematics appeared first on Physics World.

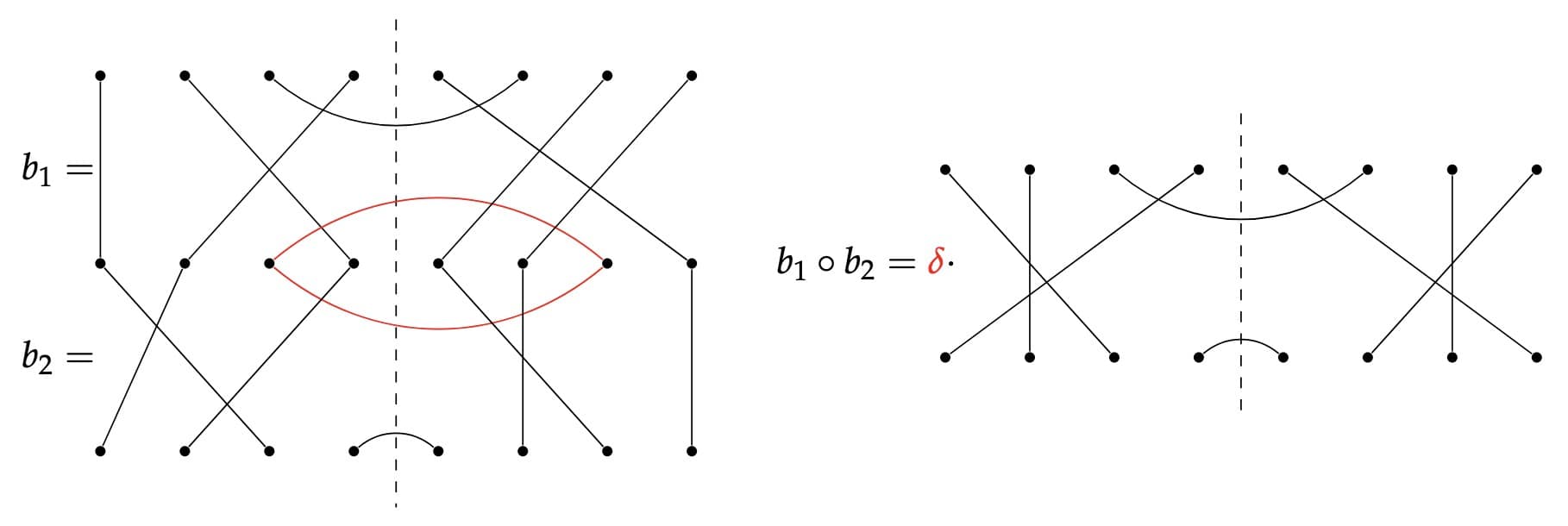

News that large language models (LLMs) have made major advances in solving Erdős problems – a set of problems formulated by the renowned 20th-century mathematician Paul Erdős – has created a mixture of uproar and interest among mathematicians. The past month alone has seen two significant LLM-generated solutions. The first relates to primitive sets (sets of integers in which no element divides another), a generalization of the prime numbers, and was solved after Liam Price, an amateur mathematician from the US, fed the problem statement into GPT-5.4 Pro without any other information. The second came last week, when the company behind ChatGPT, OpenAI, announced that it had used artificial intelligence to disprove Erdős’ planar unit distance conjecture.

LLMs have solved Erdős problems before, but the one Price chose wasn’t just any Erdős problem. It was one that human mathematicians had worked on for 60 years without success. The nature of the solution was also unusual. While previous LLM solutions to Erdős problems used standard techniques, this one took an entirely different approach. Rather than starting from Erdős’ original probability-theory-based framing of the problem, as human mathematicians had, the LLM found an alternative route – one that led naturally, in less than a page, to a correct proof.

“Paul Erdős had a concept of ‘Proofs from The Book’, meaning that the argument is so compact and elegant that this is the proof God would’ve written down in ‘The Book’,” Jared Lichtman, a mathematician at Stanford University in the US, wrote on the social media site X after the proof was announced. “After reading the GPT5.4 proof of Erdős #1196, I would say this is a Book Proof of the result.”

The planar unit distance conjecture, meanwhile, concerns a deceptively simple question: if you have n points in a plane, how many pairs of points can be exactly one unit apart? Erdős thought the maximum could grow no faster than $n^{1+C/\log\log n}$, where C is a positive constant, but OpenAI’s model showed that it can grow faster than this. What’s more, the company claims it did so not by rehashing prior work, but by “bring[ing] unexpected, sophisticated ideas from algebraic number theory to bear on an elementary geometric question”.
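The question is easy to explore numerically for small configurations. The brute-force counter uses integer coordinates and squared distances so that the test for “exactly one unit” is exact rather than a floating-point comparison. On a k × k grid with unit spacing it finds 2k(k − 1) unit pairs, only about 2n for n points. It scales as O(n²) and says nothing about the asymptotic bound; the function names are illustrative.

```python
from itertools import combinations


def count_pairs_at_squared_distance(points, d2=1):
    """Count unordered pairs of integer points whose squared distance equals d2.

    Integer coordinates and squared distances keep the comparison exact,
    avoiding floating-point tests such as math.dist(p, q) == 1.
    """
    return sum(
        1
        for (x1, y1), (x2, y2) in combinations(points, 2)
        if (x1 - x2) ** 2 + (y1 - y2) ** 2 == d2
    )


def square_grid(k):
    """A k-by-k grid of points with unit spacing (n = k * k points)."""
    return [(x, y) for x in range(k) for y in range(k)]


for k in (2, 3, 4, 10):
    n = k * k
    print(f"n = {n:3d} points: {count_pairs_at_squared_distance(square_grid(k))} unit pairs")
```

The `d2` parameter lets the same function count pairs at any squared distance, which is useful for experimenting with rescaled grids where the chosen “unit” is a longer lattice distance.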

Some members of the mathematics community have greeted these proofs, and the advent of AI in mathematics in general, with enthusiasm. OpenAI’s announcement quotes Arul Shankar, a number theorist at the University of Toronto, Canada, as saying that the new proof “demonstrates that current AI models go beyond just helpers to human mathematicians – they are capable of having original ingenious ideas, and then carrying them out to fruition”.

Others, however, are more cautious. David Bessis, a mathematician-turned-science writer who previously worked on algebra, geometry and topology, claims that even such apparent successes stem from a misconception of mathematics as a logically direct process of churning out theorems, given some rules. Writing in his Substack newsletter, Bessis argues that the method used to verify AI-generated proofs, which involves a computer program called Lean, may reduce the benefit the mathematics community gains from proofs. Notably, proofs that are verifiable in Lean are not always readable by humans, which detracts from (and in certain cases removes) the insights researchers typically get from new proofs.

How AI is being used in mathematics…

To evaluate the merits of these arguments, it’s useful to understand how AI is currently used within mathematics research. The first strategy is the one Price used to solve Erdős #1196: directly prompting an LLM. “Large language models have proven their worth at literature search: finding similar instances of a problem, or a proof, in past literature,” notes François Charton, an AI engineer at the California-based start-up AxiomMath, which is using AI to accelerate mathematics research.

The second strategy is to use AI models trained on other types of data. According to Charton, these models are especially good at spotting “weak signals and correlations” and thereby uncovering patterns in data that might be too laborious or convoluted for humans to identify.

Both methods have shown promise for generating new results, but they are not universal – at least, not yet. “It [AI] seems to do a lot better at certain types of maths than others,” says Thomas Bloom, a mathematician at the University of Manchester, UK, who maintains a webpage that tracks solutions to Erdős problems. In particular, Bloom says that to the best of his knowledge, AI “hasn’t done anything interesting in category theory” – a field whose reputation for abstraction is only matched by its track record of bridging supposedly distinct areas of mathematics.

Another challenge is that with AI systems churning out new proofs at scale, there are simply not enough people with the skills needed to check them. A process called autoformalization could solve this problem by turning human proofs into what Bessis calls “bulletproof, machine-verifiable logical derivations” expressed in Lean or other specialized languages. At that point, AI-generated proofs could be checked automatically. The question is, what knowledge will humans gain in the process?
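As a rough picture of what a “machine-verifiable logical derivation” looks like, here is a hand-written Lean 4 toy. It is not the output of any autoformalization system, and it assumes only Lean’s core library (no Mathlib). The informal claim “the sum of two even numbers is even”, with the even numbers written as 2m and 2n, becomes a statement that Lean checks mechanically.

```lean
-- Informal statement: if two natural numbers are even (written 2 * m and 2 * n),
-- then their sum is also even, i.e. equal to 2 * k for some natural number k.
theorem sum_of_evens_is_even (m n : Nat) : ∃ k, 2 * m + 2 * n = 2 * k :=
  -- Choose the witness k := m + n. `Nat.mul_add 2 m n` states
  -- 2 * (m + n) = 2 * m + 2 * n, and `.symm` flips it into the required equation.
  ⟨m + n, (Nat.mul_add 2 m n).symm⟩
```

If any step were wrong, Lean would reject the proof. A formal proof of a deep result, however, can consist of very many such low-level steps, which is where concerns about human readability arise.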

For doubters like Bessis, who refers to autoformalization (at least as practiced by certain firms) as “AI slop”, the answer is very little. But within the broader mathematics community, there is considerable interest in autoformalization, if done correctly. “I see autoformalization as the bridge in both directions, as important as proving itself,” Charton argues. “We can use Lean to translate between these two languages so that a Lean proof can be reverse-translated into a sketch, lemmas or natural language a human mathematician can engage with. That bidirectional translation preserves and extends mathematical knowledge at scale.”

…and how it isn’t

In the 18th century, when Leonhard Euler began arranging the logical thought processes of mathematics into theorems, definitions and proofs, mathematicians were primarily interested in solving problems with underpinnings in the physical world: questions of volume and distance, and, more generally, geometry and counting. Since then, though, mathematics has become a discipline that is at least as concerned with coming up with interesting problems as it is with solving them.

Two aspects of this change seem relevant to debates over AI’s utility. The first is that posing problems requires a broader skillset than solving them. The second is that solving posed problems sometimes requires mathematicians to invent new structures, tools or objects. Fermat’s Last Theorem, which posits that there are no three positive integers a, b, and c that satisfy the equation $a^n + b^n = c^n$ for any integer value of n greater than 2, is a good example. At face value, this nearly 400-year-old theorem seems simple. However, proving it was the life’s work of a modern mathematician, Andrew Wiles, who won the Abel Prize in 2016 for developing the numerous new tools required, as well as for the proof itself.
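A few lines of Python show why the statement looks so approachable. The helper below is hypothetical, written only for this illustration: it searches small cases and finds many solutions for n = 2 (the Pythagorean triples) and none for n = 3 or 4. A finite search like this, however large, says nothing about all a, b, c and n, which is why a proof required new mathematics.

```python
def fermat_counterexamples(n, limit):
    """Return all (a, b, c) with 1 <= a <= b and c <= limit such that a**n + b**n == c**n."""
    # Map each n-th power c**n back to c for fast lookup.
    nth_powers = {c ** n: c for c in range(1, limit + 1)}
    hits = []
    for a in range(1, limit + 1):
        for b in range(a, limit + 1):
            c = nth_powers.get(a ** n + b ** n)
            if c is not None:
                hits.append((a, b, c))
    return hits


print(fermat_counterexamples(2, 20))
# → [(3, 4, 5), (5, 12, 13), (6, 8, 10), (8, 15, 17), (9, 12, 15), (12, 16, 20)]
print(fermat_counterexamples(3, 100))  # → []
```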

Coming up with such tools – or indeed whole new frameworks – is a challenging and hugely creative endeavour. There are no rules as to the kinds of objects you are allowed to create, and unlike a proof (which is either correct or incorrect), there is no finality, either. If the new framework is a good one, it will crop up frequently and naturally in various branches of mathematics, and other mathematicians will incorporate it into their own work. If it isn’t, they won’t.

Currently, not even AI enthusiasts like Charton think machines are capable of such leaps. “Theory building is completely out of reach right now,” he tells Physics World. “Models, especially generative models, can provide a mathematician with interesting examples, or discover surprising relations that may bring a theoretical breakthrough, but the breakthrough still depends on the mathematician. I believe this will remain the case for some time.”

A new tool for scientists and mathematicians alike

In many areas of science, AI works in a way that is entirely distinct from human thinking. In physics, for example, machine learning algorithms are trained to analyse large amounts of data, find patterns and use them to infer underlying laws. This strategy could advance our understanding of some of the most fundamental questions in physics, but it is very different from how a human scientist would do it, and therefore perhaps more likely to be seen as a welcome new tool.

On the theorem-proving side of mathematics, the distinction between methods a human might use and those an algorithm might use is more blurred. Yet in some ways, Bloom thinks incorporating AI into mathematics could bring the field closer to other sciences. In particle physics, for example, “you don’t go in and take these individual recordings [of data]. It’s all automated,” he tells Physics World. “Until now, there has been no equivalent for maths. It takes time and attention to prove theorems, and maybe this had been a bottleneck.”

AxiomMath’s Charton agrees. “Every new math tool in history has automated something that used to be the work of a human mathematician – from the abacus all the way to symbolic algebra,” he says. “With each new tool, the role of the mathematician evolved rather than disappeared. Tasks got automated, and problems that felt impossible became trivial – but mathematicians just keep moving up the stack to the next set of questions. I see AI as the latest shift rather than a categorical break from history.”

The post AI-led solutions of Erdős problems spark debate over the future of mathematics appeared first on Physics World.

Open data: the benefits and challenges of sharing a precious resource

Laura Feetham-Walker of IOP Publishing is our podcast guest

The post Open data: the benefits and challenges of sharing a precious resource appeared first on Physics World.

Data are at the core of science, but traditional journal articles normally deliver a distillation of the raw data gathered by the authors. While the movement towards open access to data is widely supported by researchers and funding agencies, a 2024 study by IOP Publishing revealed that many scientists still encounter a wide range of practical, ethical and technical barriers when it comes to sharing their data.

As a result, the publisher has launched a free online course that aims to give early-career researchers the practical skills and confidence they need to share and manage research data effectively.

To talk about the course and IOP Publishing’s open data policy I am joined by Laura Feetham-Walker, who is head of publishing strategy and performance at IOP Publishing.

IOP Publishing is a wholly owned subsidiary of the Institute of Physics and it publishes Physics World.

- You can register for the free course here: “Open Data Excellence”


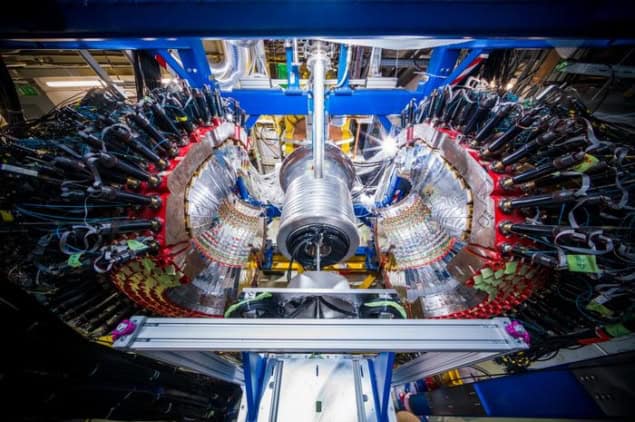

Physicist John Hill takes the helm at Brookhaven National Laboratory

Hill will manage the lab’s $900m annual budget

The post Physicist John Hill takes the helm at Brookhaven National Laboratory appeared first on Physics World.

John Hill has become director of the Brookhaven National Laboratory on Long Island, New York, after serving as interim lab director since September. Hill will now oversee Brookhaven’s 3000-strong team of scientists, engineers and technicians as well as manage the lab’s annual $900m budget.

Brookhaven opened in 1947 as one of the first three US national labs, the others being Argonne and Oak Ridge. Brookhaven carries out a wide range of research in the physical, biomedical and environmental sciences and has been the site of seven Nobel-prize-winning discoveries.

Brookhaven operated the Relativistic Heavy Ion Collider (RHIC) until it shut down in February. RHIC collided heavy nuclei such as gold and copper to produce a quark-gluon plasma – a state of matter thought to have been present in the very early universe.

In 2020, Brookhaven was chosen to host the next-generation Electron-Ion Collider (EIC). Costing about $2bn, the EIC will smash together electrons and protons to probe the strong nuclear force and the role of gluons in nucleons and nuclei.

Building the EIC involves revamping the RHIC accelerator as well as adding an electron ring and other components, with the first experiments starting in the 2030s.

As well as RHIC and the EIC, Brookhaven is also home to other big-science projects including the National Synchrotron Light Source II, which opened in 2015 at a cost of $912m.

A Brookhaven career

With a PhD in physics from the Massachusetts Institute of Technology, Hill joined Brookhaven as a postdoc in 1992 before leading the lab’s X-ray scattering group from 2001 to 2013.

He then served as deputy associate laboratory director for energy and photon sciences before becoming the lab’s deputy director for science and technology, a post he held from 2023 to 2025.

In September 2025 he became interim director following the resignation of the theoretical physicist JoAnne Hewett.

In the role, Hill will also become president of Brookhaven Science Associates – a partnership between Stony Brook University and the science and tech firm Battelle – which manages and operates Brookhaven on behalf of the US Department of Energy.

Hill notes that he is “very excited” to lead the lab in the coming years. “Brookhaven is entering a defining decade, and I’m honoured to take on this role at this time,” he says. “The vision we have for our future is a powerful one, including delivering the nation’s next particle collider and advancing science across a range of critical areas.”


Radar identifies insect species via reflections from wingbeats

A new technique that combines millimetre-wave radar with machine learning holds promise for scalable and sustainable monitoring of insect biodiversity

The post Radar identifies insect species via reflections from wingbeats appeared first on Physics World.

Pollinating insects form a vital part of any ecosystem, enabling the biodiversity that we see on Earth today. However, biodiversity is in rapid decline around the world, and monitoring insect species is a difficult task that often requires some insects to be killed. To support the conservation of biodiversity, which is critical to ensuring the sustainability of human civilization, more robust monitoring is required. In a study published in PNAS Nexus, researchers have developed a new method to identify and classify individual insects, based on radar imaging and machine learning.

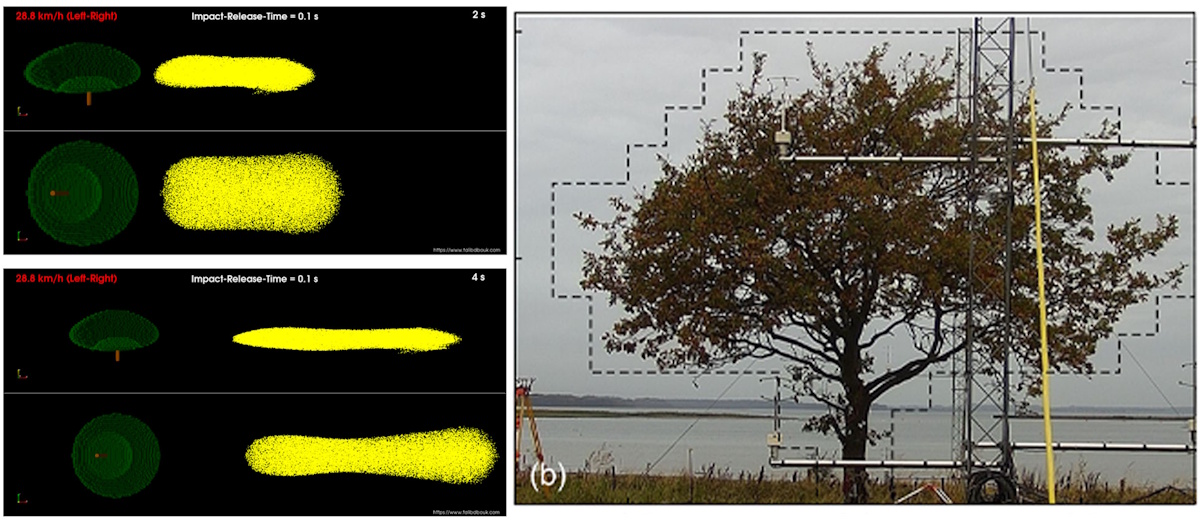

Radar has long been used to study migrating insects that fly at high altitudes and in large numbers, but such systems typically perform wide-area, long-range monitoring. Now, a combination of millimetre-wave radar and machine learning makes narrowly focused identification of individual insects possible, by detecting changes in an insect’s radar reflection caused by the flapping of its wings.

“Having a background in antenna engineering, there was always the question of whether this technology can be used to address some of the environmental challenges that we’re facing,” says co-lead author Adam Narbudowicz from the Technical University of Denmark. “Some five or six years ago, we started talking with [co-author] Ian [Donohue] about those possibilities, and eventually the idea of micro-Doppler emerged, which seemed feasible from an engineering point of view and could provide some useful data on biodiversity.”

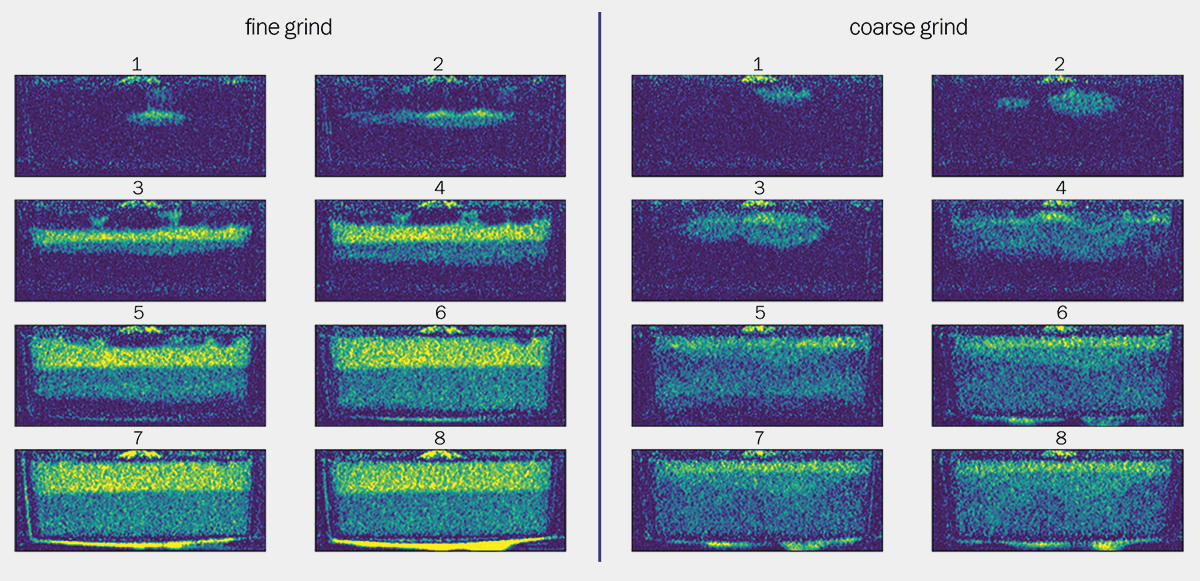

The approach taken in this study doesn’t focus on morphological features of the insects, as these are difficult to detect with radar. Instead, its detection strategy relies on the harmonic patterns that the micro-Doppler effect produces as an insect beats its wings. Millimetre-wave radar can provide insight into biomechanical characteristics not visible with cameras, and these characteristics are encoded in the harmonic patterns of the wingbeat.
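Why a wingbeat shows up as a set of harmonics can be sketched with a simplified model: treat the wing as a single point reflector moving sinusoidally towards and away from the radar, so that the round-trip phase of the echo oscillates at the wingbeat frequency. The spectrum of such a phase-modulated signal consists of lines at whole multiples of the wingbeat frequency (the Jacobi–Anger expansion). The parameter values below (a 200 Hz wingbeat, 0.5 mm effective displacement and a 3.9 mm wavelength, roughly that of 77 GHz radar) are assumptions for illustration, not values from the study.

```python
import numpy as np


def wingbeat_spectrum(f_wing=200.0, amplitude_m=0.5e-3, wavelength_m=3.9e-3,
                      fs=10_000.0, duration_s=1.0):
    """Magnitude spectrum of an idealized micro-Doppler echo from a flapping wing.

    Assumed model: a point reflector at range offset r(t) = A sin(2 pi f_wing t);
    the received baseband signal is exp(j * 4 pi r(t) / wavelength).
    """
    t = np.arange(int(fs * duration_s)) / fs
    r = amplitude_m * np.sin(2 * np.pi * f_wing * t)     # wing displacement (m)
    signal = np.exp(1j * 4 * np.pi * r / wavelength_m)    # two-way phase modulation
    spectrum = np.abs(np.fft.fft(signal)) / len(t)        # normalized magnitudes
    freqs = np.fft.fftfreq(len(t), d=1 / fs)              # bin frequencies (Hz)
    return freqs, spectrum


def strongest_lines(freqs, spectrum, count=5):
    """Return the frequencies of the `count` strongest spectral lines, sorted."""
    order = np.argsort(spectrum)[::-1][:count]
    return sorted(float(f) for f in freqs[order])


freqs, spectrum = wingbeat_spectrum()
print(strongest_lines(freqs, spectrum))  # ≈ [-400, -200, 0, 200, 400] Hz
```

The spacing of the lines gives the fundamental wingbeat frequency, while their relative strengths depend on how far the wing moves compared with the radar wavelength, which is one reason the harmonic pattern can carry species-specific information.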

The team used machine learning to improve the accuracy of the identification and incorporated a SHAP (SHapley Additive exPlanations) analysis – an explainable-AI method that attributes a model’s output to its input features – to identify which signal features are the most critical for differentiating insect species. Each insect’s signal was analysed across the full spectrum of micro-Doppler harmonics, with key features extracted including the fundamental wingbeat frequency, energy distributions, cepstral coefficients (descriptors of spectral shape borrowed from audio and speech processing) and how quickly the insect’s wing movement changes. These data were then used to train the machine learning model.
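SHAP is built on Shapley values from cooperative game theory: each feature’s contribution is its marginal effect on the model output, averaged over every order in which features could be added. The sketch below computes exact Shapley values for a tiny hypothetical model with three features (loosely labelled wingbeat frequency, energy ratio and a cepstral coefficient) against a single all-zero baseline. This single-baseline simplification and the toy model are assumptions for illustration; practical SHAP tools approximate the same quantity over background data, and this is not the study’s model.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of `model` at input x relative to a single baseline.

    A feature "absent" from a coalition is replaced by its baseline value.
    """
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Weight = |S|! (n - |S| - 1)! / n!, the Shapley coalition weight.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for s in combinations(others, size):
                s = set(s)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


# Hypothetical toy scorer: features 0 and 2 interact, feature 1 acts alone.
def toy_model(z):
    wingbeat, energy, cepstral = z
    return 3 * wingbeat + 1 * energy + 2 * wingbeat * cepstral


print(shapley_values(toy_model, [1, 1, 1], [0, 0, 0]))  # approximately [4, 1, 1]
```

The contributions add up to the difference between the model’s output at the input and at the baseline (6 here), and the interaction term is split equally between the two features involved; ranking features by the size of such contributions is how a SHAP analysis highlights the most informative signal features.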

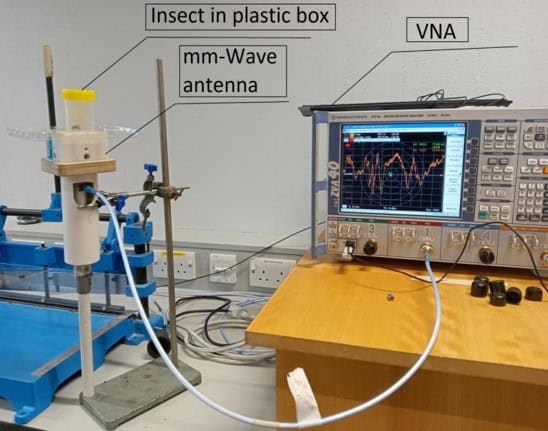

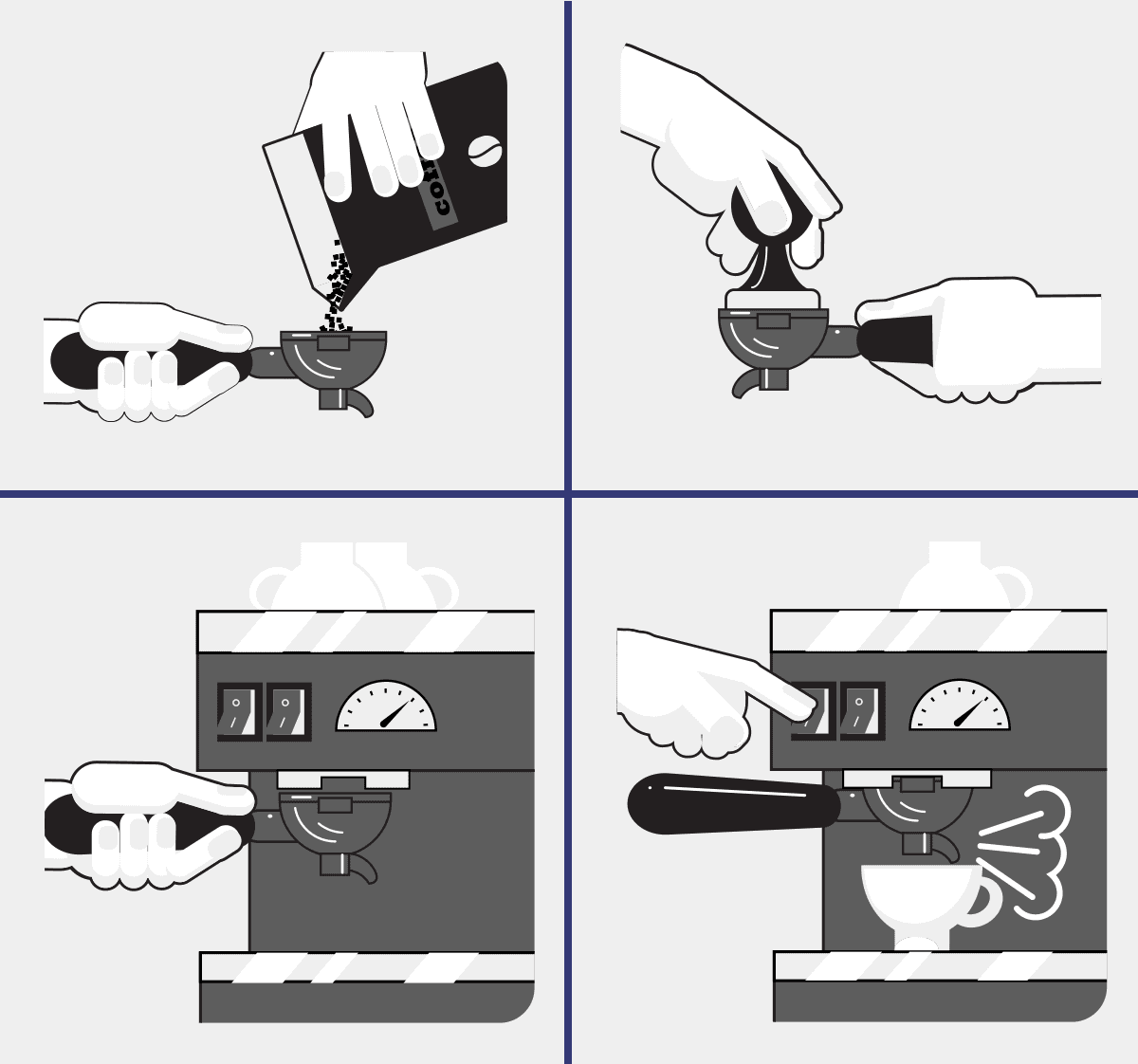

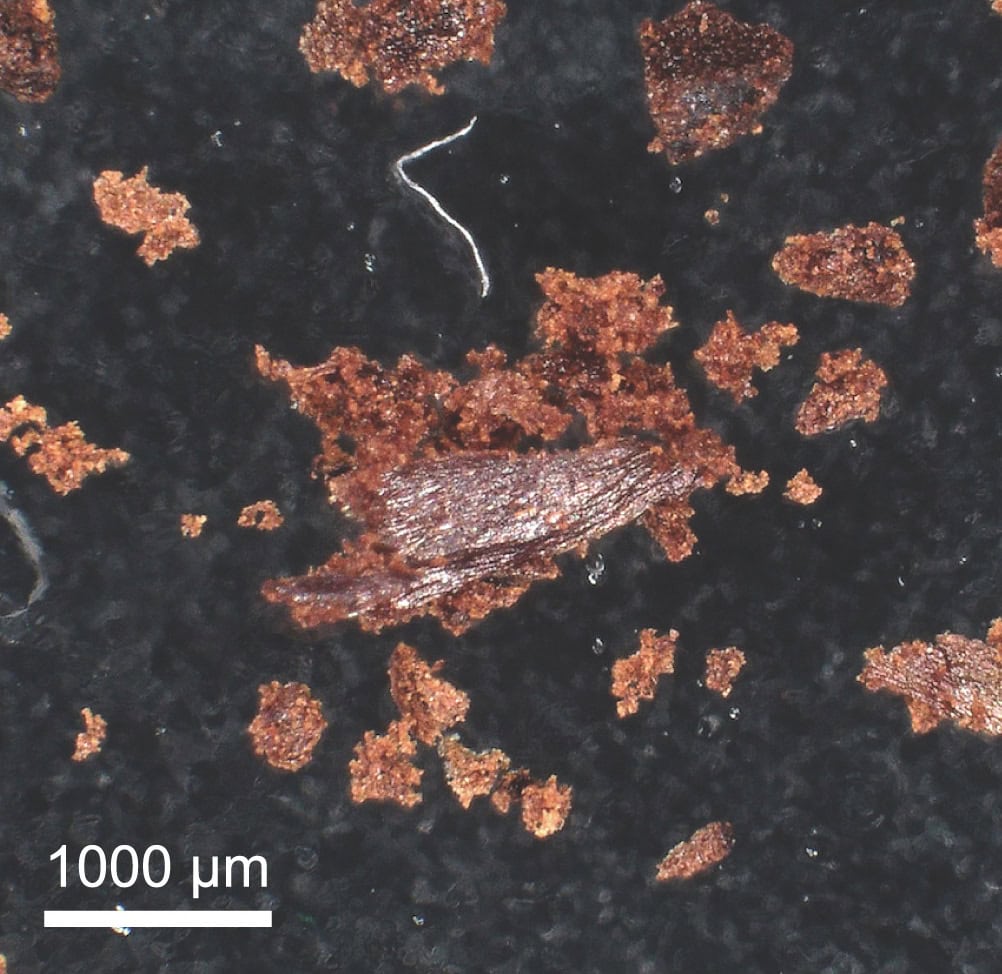

To obtain the data, the researchers captured insects on the Trinity College Dublin campus and placed them in a plastic box on top of a millimetre-wave antenna that recorded their radar signatures. The insects were then released back into the wild. After data capture, the relevant micro-Doppler features were extracted from the recordings for model training.

The model allowed non-invasive monitoring of different insects and could distinguish between bees and wasps with 96% accuracy. The model also classified five key pollinating insect species – red-tailed bumblebee, buff-tailed bumblebee, moss carder bumblebee, western honeybee and common wasp – with an accuracy of 85%.